The tactic lets the attacker specify which face the categoriser should "see". Apparently the researchers could convince the cameras that they were looking at the musician Moby or the Korean politician Hoi-Chang. Quite what the researchers had against Moby is unknown; we guess they didn't like his last album.

Their experiment relies on blind spots in machine learning models, which can be systematically discovered and exploited to confuse these classifiers.

The gadget used in their attack is not readily distinguishable from a regular baseball cap, and the attack needs only a single photo of the person to be impersonated to set up the correct light patterns.
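For the curious, the idea of tuning light patterns against a single target photo can be illustrated with a toy search. This is only a sketch under loud assumptions, not the researchers' algorithm: the "recogniser" is a made-up random linear embedding, the "faces" are random pixel grids, and the names `embed`, `add_spots` and `search_spots` are hypothetical. It simply nudges the position and brightness of a few light spots and keeps any change that moves the attacker's embedding closer to the target's.

```python
import numpy as np

rng = np.random.default_rng(0)
SIZE = 16  # toy images are SIZE x SIZE pixels

# Assumption: a fixed random linear map stands in for a real face recogniser.
W = rng.normal(size=(8, SIZE * SIZE))

def embed(img):
    """Map an image to a small feature vector (hypothetical model)."""
    return W @ img.ravel()

def add_spots(img, spots):
    """Overlay Gaussian light spots; each spot is (row, col, brightness)."""
    rows, cols = np.mgrid[0:SIZE, 0:SIZE]
    out = img.copy()
    for r, c, b in spots:
        out += b * np.exp(-((rows - r) ** 2 + (cols - c) ** 2) / (2 * 2.0 ** 2))
    return np.clip(out, 0.0, 1.0)

def distance_to_target(attacker, target, spots):
    """Embedding distance between the lit attacker image and the target photo."""
    return float(np.linalg.norm(embed(add_spots(attacker, spots)) - embed(target)))

def search_spots(attacker, target, n_spots=3, steps=500):
    """Random local search: keep a change only if it reduces the distance."""
    # Start with the lights switched off (brightness 0) at random positions.
    spots = np.column_stack([
        rng.uniform(0, SIZE - 1, n_spots),
        rng.uniform(0, SIZE - 1, n_spots),
        np.zeros(n_spots),
    ])
    best = distance_to_target(attacker, target, spots)
    for _ in range(steps):
        cand = spots + rng.normal(scale=[1.0, 1.0, 0.05], size=spots.shape)
        cand[:, :2] = np.clip(cand[:, :2], 0, SIZE - 1)
        cand[:, 2] = np.clip(cand[:, 2], 0.0, 1.0)  # light can only add brightness
        d = distance_to_target(attacker, target, cand)
        if d < best:
            spots, best = cand, d
    return spots, best

attacker = rng.uniform(0, 1, (SIZE, SIZE))  # stand-in for the attacker's face
target = rng.uniform(0, 1, (SIZE, SIZE))    # stand-in for the single victim photo
baseline = distance_to_target(attacker, target, np.zeros((0, 3)))
spots, best = search_spots(attacker, target)
print(f"distance without spots: {baseline:.3f}, with spots: {best:.3f}")
```

Because the search starts with the lights off and only accepts improvements, the final distance can never be worse than the unlit baseline. How much it improves in this toy setup says nothing about real face recognition systems.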

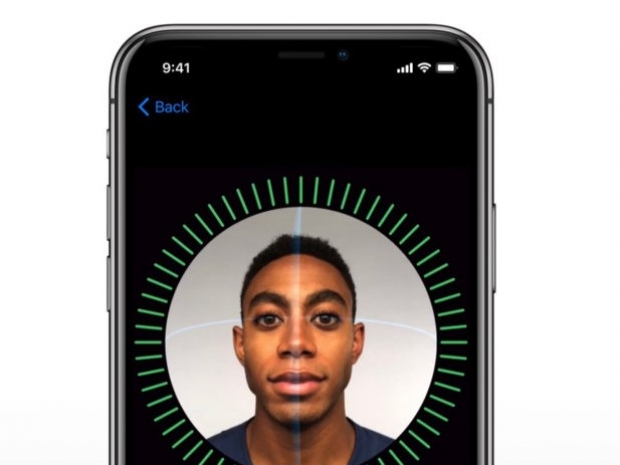

In their paper, the researchers found that attackers could use infrared light either to dodge recognition or to impersonate someone else when facing machine learning systems.

"To prove the severeness, we developed an algorithm to search adversarial examples. Besides, we designed an inconspicuous device to implement those adversarial examples in the real world. As showcases, some photos were selected from the LFW dataset as hypothetical victims. We successfully worked out adversarial examples and implemented those examples for those victims. What’s more, we conducted a large-scale study among the LFW dataset, which showed that for a single attacker, over 70 percent of the people could be successfully attacked, if they have some similarity", the report said.

"Based on our findings and attacks, we conclude that face recognition techniques today are still far from secure and reliable when being applied to critical scenarios like authentication and surveillance. Researchers should pay more attention to the threat from infrared", the report added.

This is bad news for Apple users and for all those companies which are thinking of copying its facial recognition. To fool the facial recognition software, all that would be needed is a picture of the phone's owner and an LED light.