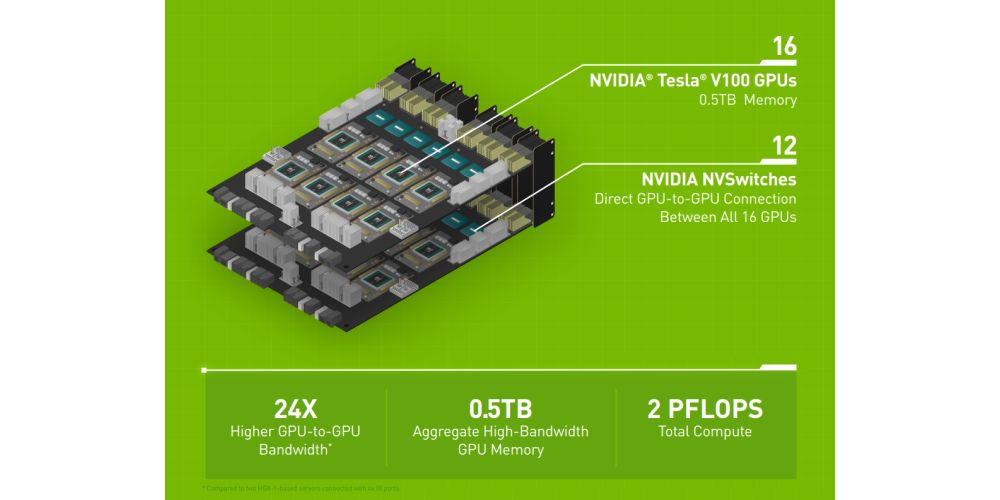

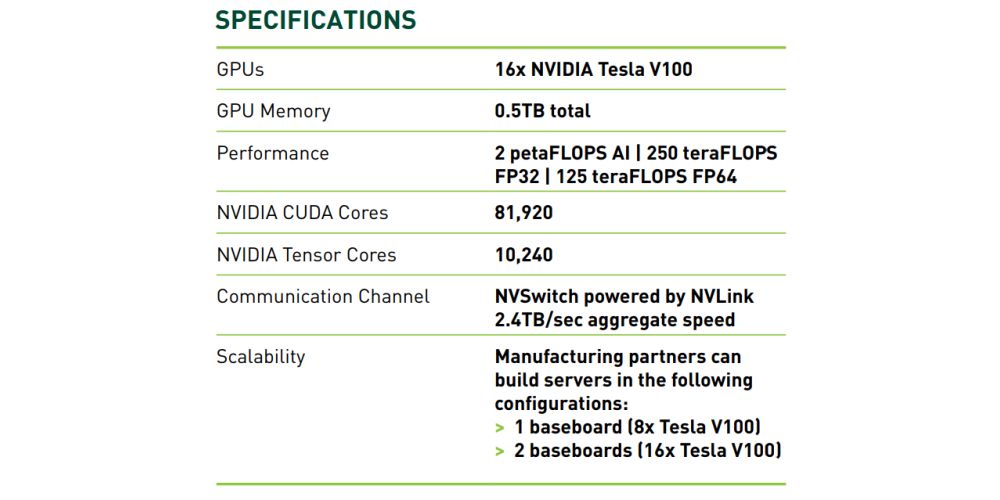

The platform combines 16 Tesla V100 GPUs, which work together as one giant virtual GPU with half a terabyte of GPU memory and two petaflops of computing power. This is achieved using Nvidia's NVSwitch interconnect fabric, which links the GPUs so that they behave as a single device.

Talking to the assorted throngs at Nvidia’s GTC (GPU Technology Conference) event in Taiwan, CEO Jensen Huang said the system will not be coming to your local PC. It is designed for high-precision calculations using FP64 and FP32 for scientific computing and simulations, while also enabling FP16 and Int8 for AI training and inference.

Huang said: “The world of computing has changed. CPU scaling has slowed at a time when computing demand is skyrocketing. NVIDIA’s HGX-2 with Tensor Core GPUs gives the industry a powerful, versatile computing platform that fuses HPC and AI to solve the world’s grand challenges.”

According to Nvidia, the HGX-2 has achieved record AI training speeds of 15,500 images per second on the ResNet-50 training benchmark, and is powerful enough to replace up to 300 CPU-only servers.

According to Paul Ju, vice president and general manager of Lenovo DCG: “NVIDIA’s HGX-2 ups the ante with a design capable of delivering two petaflops of performance for AI and HPC-intensive workloads. With the HGX-2 server building block, we’ll be able to quickly develop new systems that can meet the growing needs of our customers who demand the highest performance at scale.”

GPU stats

The HGX-2 is powered by the Nvidia Tesla V100 GPU. Each one comes equipped with 32GB of high-bandwidth memory and delivers 125 teraflops of deep learning performance. Combining 16 of those GPUs is what gives the platform its half a terabyte of GPU memory and two petaflops of computing power.
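The headline numbers can be checked against the per-GPU figures. The following is a quick sketch of that arithmetic, assuming memory and peak throughput simply add up across the 16 GPUs (a simplification; real workloads rarely scale perfectly). The function and constant names are illustrative only.

```python
# Per-GPU figures quoted in the article for the Tesla V100.
GPU_COUNT = 16
MEMORY_PER_GPU_GB = 32
DEEP_LEARNING_TFLOPS_PER_GPU = 125


def aggregate(gpu_count, memory_gb, tflops):
    """Return (total memory in GB, total compute in petaflops).

    Assumes simple linear scaling, which is an idealisation.
    """
    total_memory_gb = gpu_count * memory_gb
    total_pflops = gpu_count * tflops / 1000  # 1 petaflop = 1000 teraflops
    return total_memory_gb, total_pflops


total_memory_gb, total_pflops = aggregate(
    GPU_COUNT, MEMORY_PER_GPU_GB, DEEP_LEARNING_TFLOPS_PER_GPU
)
print(f"{total_memory_gb} GB of GPU memory, {total_pflops} petaflops")
```

That works out to 512GB, or half a terabyte, and two petaflops, which matches the figures Nvidia quotes for the platform.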

“Every one of the GPUs can talk to every one of the GPUs simultaneously at a bandwidth of 300 GB/s, 10 times PCI Express”, Huang said. “So everyone can talk to each other all at the same time.”
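To put the bandwidth claim in perspective, here is a rough back-of-the-envelope sketch. It takes Huang's 300 GB/s figure at face value, derives the PCI Express figure from his "10 times" comparison (about 30 GB/s, an inference rather than a quoted number), and ignores latency and protocol overhead. The helper name is hypothetical.

```python
NVSWITCH_GB_PER_S = 300                  # figure quoted by Huang
PCIE_GB_PER_S = NVSWITCH_GB_PER_S / 10   # implied by "10 times PCI Express"


def transfer_seconds(data_gb, bandwidth_gb_per_s):
    """Idealised time to move data_gb gigabytes at a sustained bandwidth."""
    return data_gb / bandwidth_gb_per_s


# Example: moving one V100's worth of memory (32GB) between GPUs.
data_gb = 32
nvswitch_time = transfer_seconds(data_gb, NVSWITCH_GB_PER_S)
pcie_time = transfer_seconds(data_gb, PCIE_GB_PER_S)
print(f"NVSwitch: {nvswitch_time:.2f} s, PCI Express: {pcie_time:.2f} s")
```

Under these assumptions, copying a full 32GB of GPU memory takes roughly a tenth of a second over NVSwitch, against about a second over PCI Express.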