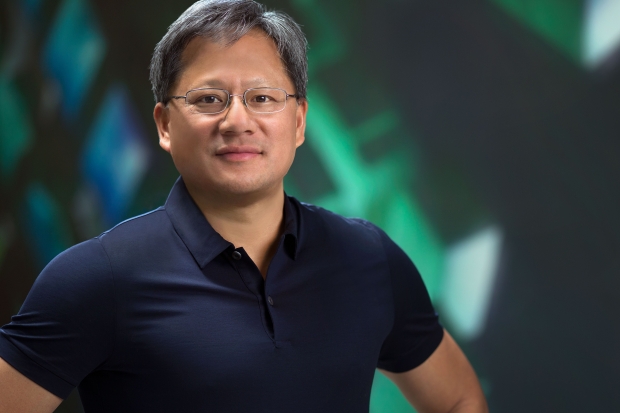

Shah, who works for Japanese financial holding company Nomura, seemed rather enthusiastic about Nvidia’s data centre business after his chat. We expect that Huang must have broken out the chocolate biscuits; analysts are not used to that.

Huang was apparently animated about the prospects for the data centre business, as hyperscale companies quickly adopt throughput computing in an effort to accelerate workload performance.

Nvidia’s data centre revenues grew by 63 percent year-on-year last quarter, mostly due to the “broad adoption” of the Tesla M40 GPU accelerator. Nomura believes the company has an 80 percent share of the accelerator market, which accounts for less than one percent of overall data centre spending.

One product name dropped was the Tesla M40 GPU, which was designed for machine learning and features 3,072 CUDA cores and 12GB of GDDR5 memory, with up to seven teraflops of single-precision performance.

Nvidia’s theory is that the accelerator can deliver eight times more compute than a traditional CPU.

The vision is more or less what Huang has been banging on about for a while. Machine learning is great, we have no idea how great, but in the future almost no transaction with the Internet will happen without deep learning or some machine learning inference.

Huang said earlier this year: “There’s no recommendation of a movie, no recommendation of a purchase, no search, no image search, no text that won’t somehow have passed through some smart chatbot or some machine learning algorithm so that they could make the transaction more useful to you.”

Shah also sees continued deal making by Huang in the automotive market, as Nvidia’s chips help with auto-pilot and eventually self-driving functions: “We expect auto-pilot engagements, which increased from 5 to over 80 through FY16, to drive automotive revenue late in CY16.”