Taking inspiration from Marvel’s Iron Man comics, he named his assistant Jarvis and began coding in Python, PHP and Objective-C, drawing on recent machine learning advances in natural language processing, speech recognition, face recognition and reinforcement learning. The ultimate goal of the project was to gauge the present state of AI technologies: “where we’re further along than people realize and where we’re still a long ways off,” he said.

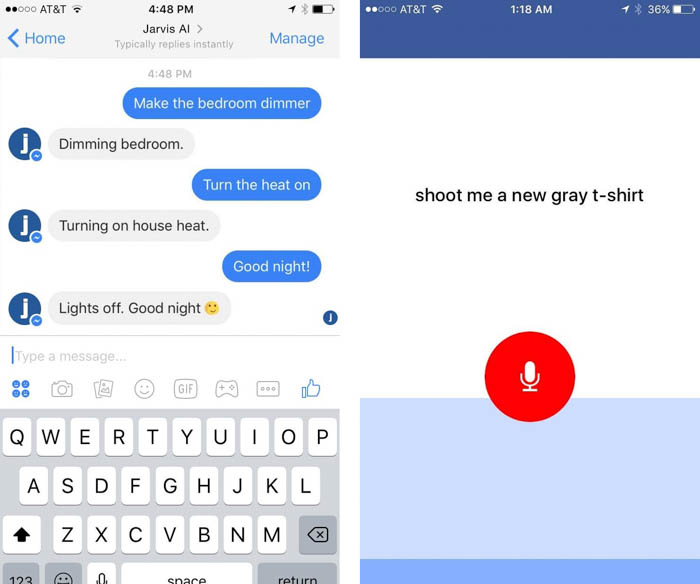

On the surface, Zuckerberg’s custom Jarvis server box resembles many do-it-yourself home automation setups that can dim lights, adjust the temperature, switch on appliances or control a home theater. But most of these devices don’t necessarily work together out of the box, and he admits that the most complicated part of the project was “simply connecting and communicating with all of the different systems in my home.”

Reinforcement learning strengthens automated responses

The goal was to have Jarvis understand natural language requests and respond to them as if it were another human being. That meant learning the behaviors, routines and habits of each individual user, namely Zuckerberg and his wife, Priscilla Chan.

For example, given the request “play some light music,” Jarvis checks the user’s past listening history and picks music to match, much like Amazon’s Alexa assistant but with subtle differences. Harder are requests that mention the same artist in different ways, such as “play Someone Like You” (a specific Adele song), “play someone like Adele” (artists similar to her) or “play some Adele” (her own music). To learn to tell these apart, the system uses positive and negative feedback to reinforce the right responses.
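The article doesn’t describe how Jarvis implements this feedback loop. One simple way to picture it is an epsilon-greedy learner: it keeps a score for each (request, interpretation) pair, usually picks the best-scoring interpretation, occasionally tries another, and nudges the chosen score toward +1 or -1 depending on whether the user was satisfied. This is a hypothetical Python sketch, not Zuckerberg’s code; the interpretation labels, learning rate, exploration rate and the simulated user are all assumptions.

```python
import random
from collections import defaultdict

# Hypothetical interpretations of a music request (illustrative labels only).
INTERPRETATIONS = ("play_track", "play_similar_artists", "play_artist")


class FeedbackDisambiguator:
    """Epsilon-greedy learner that reinforces interpretations the user approves of."""

    def __init__(self, epsilon=0.1, learning_rate=0.5, seed=None):
        self.epsilon = epsilon              # probability of trying a random interpretation
        self.learning_rate = learning_rate  # step size for moving a score toward the reward
        self.scores = defaultdict(float)    # (request, interpretation) -> estimated value
        self.rng = random.Random(seed)

    @staticmethod
    def _key(request):
        # Scores are keyed on the normalized request text (an assumption of this sketch).
        return request.lower().strip()

    def best(self, request):
        # Highest-scoring interpretation; ties go to the earliest entry in INTERPRETATIONS.
        key = self._key(request)
        return max(INTERPRETATIONS, key=lambda i: self.scores[(key, i)])

    def choose(self, request):
        # Explore occasionally so a wrong early guess can still be corrected.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(INTERPRETATIONS)
        return self.best(request)

    def feedback(self, request, interpretation, reward):
        # reward: +1 if the user was satisfied, -1 if they corrected the response.
        k = (self._key(request), interpretation)
        self.scores[k] += self.learning_rate * (reward - self.scores[k])


def simulate(agent, request, intended, rounds=200):
    """Hypothetical simulated user who rewards only the intended interpretation."""
    for _ in range(rounds):
        choice = agent.choose(request)
        agent.feedback(request, choice, 1.0 if choice == intended else -1.0)


agent = FeedbackDisambiguator(seed=42)
simulate(agent, "play Someone Like You", "play_track")
simulate(agent, "play someone like Adele", "play_similar_artists")
simulate(agent, "play some Adele", "play_artist")
for request in ("play Someone Like You", "play someone like Adele", "play some Adele"):
    print(request, "->", agent.best(request))
```

Keying scores on the exact request text keeps the sketch short, but it also means nothing learned about “play some Adele” carries over to other artists; a real assistant would need features that generalize across phrasings.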

Connecting the devices was a challenge in its own right. “I had to reverse engineer APIs for some of these to even get to the point where I could issue a command from my computer to turn the lights on or get a song to play,” he says. That meant writing his own integrations for a Crestron lighting system, a Samsung TV, a Nest Cam, Spotify and a Sonos wireless speaker system.

Facial recognition at the doorstep

One of the niftier features of Jarvis is its ability to recognize the faces of people ringing the doorbell. Zuckerberg installed a few cameras at his front door to capture images from all angles, then connected them to a server that continuously watches for anyone who appears in view. When a face is detected, the server runs face recognition to identify the person and then checks a custom list to determine whether Zuckerberg is expecting that visitor.
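The article doesn’t say which face recognition model Jarvis uses. A minimal sketch of the identify-then-check-the-list step, assuming a separate model (not shown) has already turned a detected face into an embedding vector: find the nearest known face by cosine similarity, reject weak matches, then look the name up in an expected-guests set. `KNOWN_FACES`, `EXPECTED_GUESTS`, `MATCH_THRESHOLD` and the toy vectors are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional

# Assumption: an upstream detector/embedding model maps each face crop to a
# fixed-length vector. These three-number vectors are made-up toy data.
KNOWN_FACES = {
    "Alice": (0.9, 0.1, 0.3),
    "Bob": (0.1, 0.8, 0.5),
}
EXPECTED_GUESTS = {"Alice"}  # hypothetical "custom list" of visitors expected today
MATCH_THRESHOLD = 0.95       # hypothetical minimum cosine similarity for a match


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def identify(embedding) -> Optional[str]:
    """Return the closest known name, or None if no known face is similar enough."""
    name, score = max(
        ((n, cosine(embedding, e)) for n, e in KNOWN_FACES.items()),
        key=lambda pair: pair[1],
    )
    return name if score >= MATCH_THRESHOLD else None


@dataclass
class DoorbellDecision:
    name: Optional[str]  # None means the visitor was not recognized
    expected: bool       # True only for recognized visitors on the expected list


def handle_visitor(embedding) -> DoorbellDecision:
    name = identify(embedding)
    return DoorbellDecision(name=name, expected=name in EXPECTED_GUESTS)


print(handle_visitor((0.88, 0.12, 0.31)))  # close to Alice's toy vector
print(handle_visitor((0.12, 0.79, 0.52)))  # close to Bob's toy vector
print(handle_visitor((0.0, 0.0, 1.0)))     # matches no one above the threshold
```

Separating “who is this?” from “are they expected?” means the guest list can change day to day without retraining or re-enrolling anyone.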

Voice commands are the most efficient control method

Of course, not every automated task is simpler than its manual counterpart. The Wirecutter offers a good example: to turn off a connected lightbulb, a user has to find their smartphone, unlock it, scroll to the lighting app and open it, select the bulb, and then enter the command. In this situation, voice control can be much quicker, cutting out the extra steps that come with trying to make appliances “smarter.” This is where platforms such as Apple HomeKit, Google Home, Samsung SmartThings and Stringify give users more seamless control over compatible devices.

Source: Facebook

Zuckerberg reached the same conclusion from his Jarvis project.

“Even though I think text will be more important for communicating with AIs than people realize, I still think voice will play a very important role too,” he says. “The most useful aspect of voice is that it's very fast. You don't need to take out your phone, open an app, and start typing -- you just speak.”

To enable voice recognition, Zuckerberg built a dedicated Jarvis iOS app that listens to him throughout the day, and he says he plans to build an Android version soon. The app sits on his desk and picks up his voice patterns and expressions, much like a voice recorder, but its processing relies on algorithms very similar to those behind Amazon’s Echo. Because he wants to send Jarvis more requests when he is away from home, it was important to build a mobile app that works remotely.

“In a way, AI is both closer and farther off than we imagine,” he says. “AI is closer to being able to do more powerful things than most people expect -- driving cars, curing diseases, discovering planets, understanding media. Those will each have a great impact on the world, but we're still figuring out what real intelligence is.”