According to Tom's Hardware, Nvidia’s new technology uses an AI deep neural network to analyse existing videos and then apply the visual elements to new 3D environments.

While Nvidia claims this new technology could be a revolutionary step forward in creating 3D worlds, there are still a few problems.

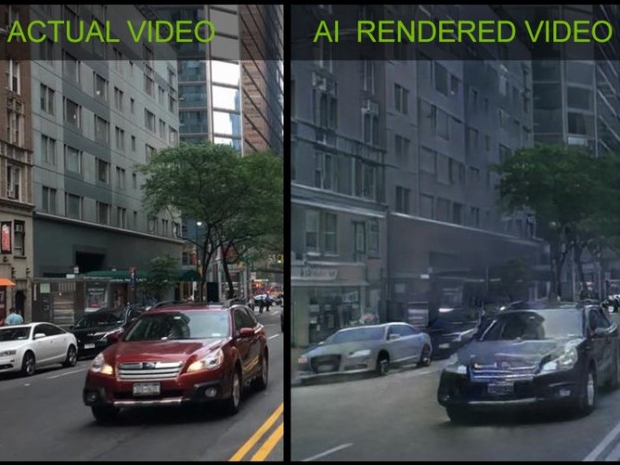

The tech allows AI models to be trained from video to automatically render buildings, trees, vehicles, and objects into new 3D worlds, instead of requiring the normal painstaking process of modelling the scene elements. The results, however, are still pretty pants.

The image on the right was generated in real time on an Nvidia Titan V graphics card using its Tensor cores. The result is something like a Stalin tone colour TV.

Its one advantage is that, because it generates the scenes in real time, the output looks much better when you see it in a video.

Nvidia's researchers have also used this technique to model other motions, such as dance moves, and then apply those same moves to other characters in real-time video.

This means that altered videos, like deepfakes and those of a Trump press conference, will be much better and harder to spot as fakes.

Nvidia cautions that this isn't a shipping product yet. The company did theorise that it would be useful for enhancing older games by analysing the scenes and then applying trained models to improve the graphics.

It could also be used to create new levels and content in older games. In time, the company expects the technology to spread and become another tool in the game developers' toolbox.

The company has open-sourced the project, so anyone can download it and begin using it today, though it is currently geared towards AI researchers.