Vega 7nm, or Vega 20 as AMD called this GPU on its roadmap a while ago, naturally increases performance in deep learning and artificial intelligence, but adding 32GB of HBM2 also made it too expensive to launch as a gaming part. This is a "we told you so" moment.

The original Vega 64 and 56 only turned a profit because the market went crazy for any hardware suitable for cryptocurrency mining. Since this wave of interest seems to be over, AMD could not bet on making, let’s say, a 16GB HBM2 Vega 7nm gaming part, as the BOM would still be too expensive.

7nm has massive advantages

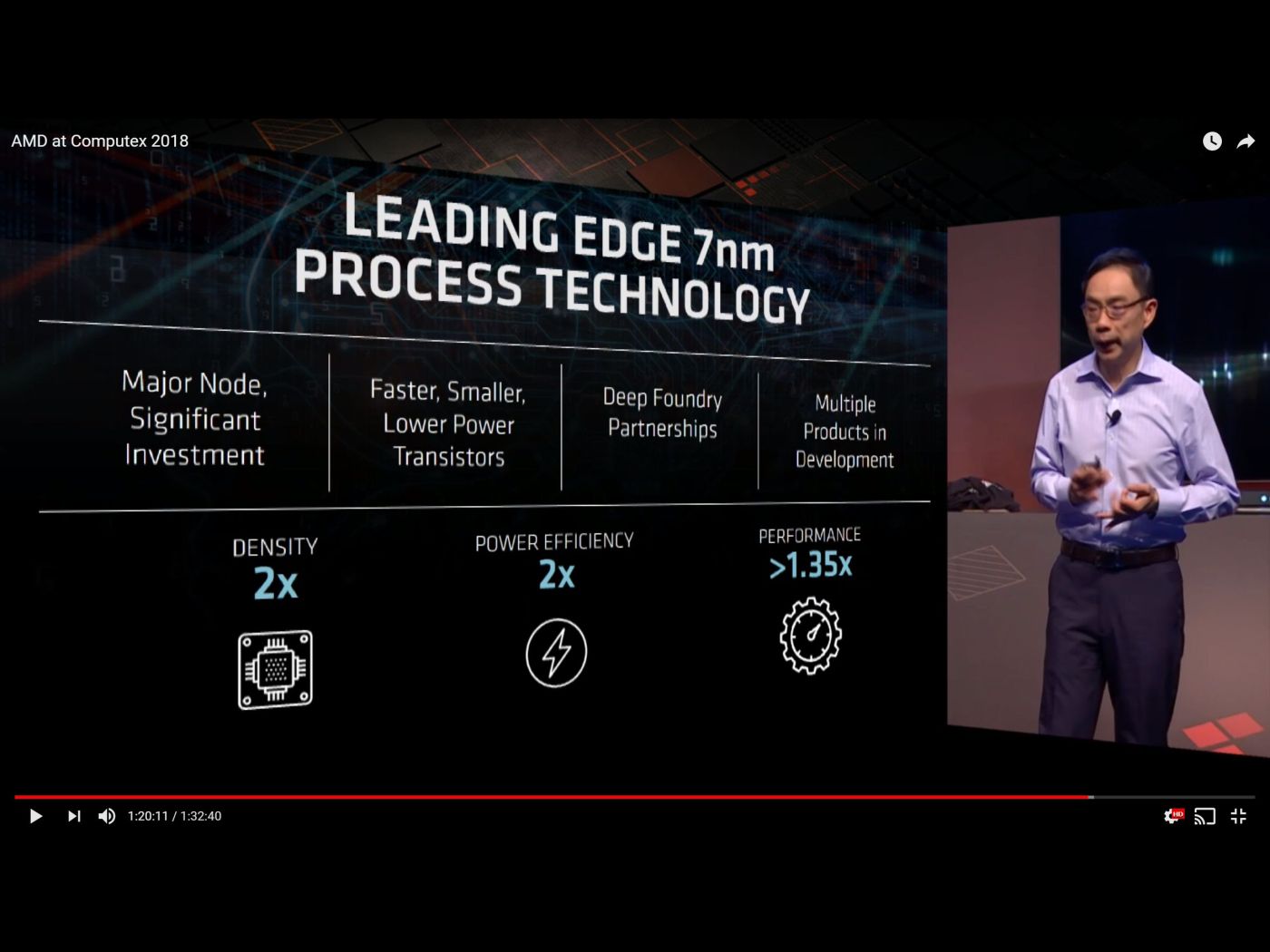

David Wang, SVP of Engineering at AMD, an ex-Synaptics chap who took over after Raja left, went into a few more details about Vega 7nm. David said that the new 7nm process has twice the transistor density in the same area. This was not really a surprise, but AMD also claims that the new transistors are twice as power efficient as those of the last 16nm technology. AMD still calls these goals, so nothing is set in stone.

It is kind of weird that AMD compares 7nm with 16nm, as Vega 10, sold under the Vega 64 brand, launched as a 14nm GlobalFoundries part. It is all marketing, so it doesn’t need to make real engineering sense.

When it comes to performance, AMD claims that the new 7nm part offers a 35 percent uplift over 16nm Vega parts. The unnamed foundry behind the 7nm Vega was described as one of those AMD has deep foundry partnerships with (plural). So, AMD will continue to flirt with both GlobalFoundries and TSMC.
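To put the three headline numbers side by side, here is a rough back-of-the-envelope sketch. It assumes that "two times power efficient" means performance per watt and that all three targets could hold at once, neither of which AMD confirmed; foundry figures of this kind are often either/or. The function and constant names are made up for illustration.

```python
# Back-of-the-envelope reading of AMD's stated 7nm goals versus 16nm.
# Assumption: the gains can be combined, which AMD did not confirm.

DENSITY_GAIN = 2.0      # claimed: twice the transistor density
EFFICIENCY_GAIN = 2.0   # claimed: twice the power efficiency (assumed perf per watt)
PERF_GAIN = 1.35        # claimed: 35 percent performance uplift


def relative_area(transistor_ratio=1.0, density_gain=DENSITY_GAIN):
    """Die area relative to the 16nm part for a given transistor budget."""
    return transistor_ratio / density_gain


def relative_power(perf_ratio=PERF_GAIN, efficiency_gain=EFFICIENCY_GAIN):
    """Power draw relative to the 16nm part at a given performance level."""
    return perf_ratio / efficiency_gain


if __name__ == "__main__":
    print(f"Same transistor count: {relative_area():.2f}x the area")
    print(f"Same performance:      {relative_power(1.0):.2f}x the power")
    print(f"35 percent faster:     {relative_power():.3f}x the power")
```

Under these assumptions, a straight shrink of an existing design would need about half the area, and a part running 35 percent faster would still draw roughly two thirds of the power. Since AMD calls these goals, treat the output as a ceiling on what the claims imply, not as a measurement.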

AMD has multiple 7nm products in the validation and sampling stage.

Vega Instinct 7nm is optimized for server and workstation compute, with a high-speed interconnect (Infinity Fabric), hardware-based virtualization and new deep learning ops. The emphasis is on performance, power and density advances over the previous parts.

Infinity Fabric is a differentiating factor according to Wang, but then again Nvidia has NVLink, which works really well in IBM’s POWER9 environment.

Deep learning advancements should increase the performance of training and inferencing. Vega Instinct 7nm will be optimal for data center and workstation applications.

AMD is betting on its open software ecosystem for machine learning and going against CUDA. Needless to say, AMD is really late to this game, as Nvidia has been locking developers into its way of doing AI and ML with CUDA for more than nine years now. ROCm (Radeon Open Ecosystem) is a complete solution that is ready today. It supports Google’s TensorFlow as well as the PyTorch, Caffe2, Caffe and MXNet frameworks, and it is optimized for common libraries.

AMD can help accelerate image, video and speech as well as natural language processing training without writing a single line of CUDA. These were David’s words.

Ray Tracing demoed

AMD supports an open source GPU ray tracing renderer, a rendering solution for GPU ray tracing.

There is a Radeon Rays library available, and AMD is adding a machine learning rendering/hybrid rendering capability as well as cloud rendering. This might be available at a later date, but not today.

The solution is claimed to be fast and easy as it is open source. Usually open source solutions are neither fast nor easy, but let’s take AMD's word for it. The solution is hardware agnostic and can run on AMD, Intel or Nvidia hardware.

GPU ray tracing via Radeon ProRender has been natively integrated into Cinema 4D and Modo, and is available as a plugin for 3ds Max, Blender, Autodesk Maya, SolidWorks and Rhino 6.

AMD demonstrated a 7nm GPU with 32GB of HBM2 running a motorcycle render demo in Cinema 4D R19 with Radeon ProRender. The demo looked good, but it is hard to tell what the difference would be between running this on a Vega 10 based Radeon Pro / Instinct card and on a 7nm Vega 20 card. Of course, 32GB of HBM2 lets you use large data sets for textures and the like.