For those who came in late, generative adversarial networks (GANs) have been used for AI painting, superimposing celebrity faces on the bodies of porn stars, and other cultural feats. They work by pitting two neural networks against each other to create realistic outputs based on what they are fed. Feed one lots of dog photos, and it can create new dogs; feed it lots of faces, and it can create new mugshots.

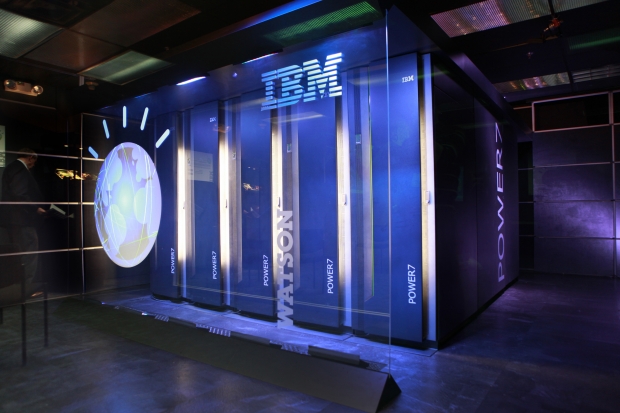

Researchers from the MIT-IBM Watson AI Lab realised GANs are also a powerful research tool: because they paint what they’re “thinking”, they could give humans insight into how neural networks learn and reason. The broader research community has sought this kind of insight for a long time, and it has become more important with our increasing reliance on algorithms.

David Bau, an MIT Ph.D. student who worked on the project, told MIT Technology Review that the team had a chance to learn what a network knows from its attempts to re-create the visual world.

So the researchers began probing a GAN’s learning mechanics by feeding it various photos of scenery—trees, grass, buildings, and sky. They wanted to see whether it would learn to organise the pixels into sensible groups without being explicitly told how.

Stunningly, over time, it did. By turning “on” and “off” various “neurons” and asking the GAN to paint what it thought, the researchers found distinct neuron clusters that had learned to represent a tree, for example. Other clusters represented grass, while still others represented walls or doors. In other words, it had managed to group tree pixels with tree pixels and door pixels with door pixels regardless of how these objects changed colour from photo to photo in the training set.
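The on/off probing described above can be illustrated with a toy sketch. This is not the researchers' code: the "generator" here is a hypothetical hand-written table of weights (`WEIGHTS`) that maps four units to a six-pixel image, and the pixel labels in `TREE_PIXELS` are assumed for illustration. The idea it shows is simply that switching one unit off at a time and measuring how much the tree region fades points to the units that paint trees.

```python
# Toy stand-in for one generator layer (hypothetical values, not a real GAN):
# each hidden unit contributes to the output pixels through fixed weights.
WEIGHTS = [
    [0.9, 0.8, 0.7, 0.0, 0.0, 0.0],  # unit 0
    [0.6, 0.7, 0.9, 0.1, 0.0, 0.0],  # unit 1
    [0.0, 0.0, 0.1, 0.8, 0.9, 0.7],  # unit 2
    [0.0, 0.1, 0.0, 0.7, 0.6, 0.9],  # unit 3
]
TREE_PIXELS = [0, 1, 2]  # assumed: pixels a human labelled as "tree"


def render(activations, mask=None):
    """Paint the image; mask[i] = 0.0 switches unit i off."""
    if mask is None:
        mask = [1.0] * len(activations)
    n_pixels = len(WEIGHTS[0])
    image = [0.0] * n_pixels
    for act, m, row in zip(activations, mask, WEIGHTS):
        for p in range(n_pixels):
            image[p] += act * m * row[p]
    return image


def region_sum(image, pixels):
    """Total intensity inside one labelled region."""
    return sum(image[p] for p in pixels)


def ablation_effect(activations, pixels):
    """Drop in region intensity when each unit is switched off in turn."""
    baseline = region_sum(render(activations), pixels)
    effects = []
    for i in range(len(activations)):
        mask = [1.0] * len(activations)
        mask[i] = 0.0
        ablated = region_sum(render(activations, mask), pixels)
        effects.append(baseline - ablated)
    return effects


activations = [1.0, 1.0, 1.0, 1.0]
effects = ablation_effect(activations, TREE_PIXELS)
# Units whose removal wipes out most of the tree region (threshold is arbitrary).
tree_units = [i for i, e in enumerate(effects) if e > 1.0]
print(tree_units)
```

In this toy setup, units 0 and 1 come out as the "tree" cluster because removing either one dims the tree pixels far more than removing unit 2 or 3 does. A real GAN has thousands of units and nonlinear layers, so the same measurement would have to be made on actual generated images.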

“These GANs are learning concepts very closely reminiscent of concepts that humans have given words to”, said Bau.

Not only that, but the GAN seemed to know what kind of door to paint depending on the type of wall pictured in an image. It would paint a Georgian-style door on a brick building with Georgian architecture, or a stone door on a Gothic building. It also refused to paint any doors on a piece of sky. Without being told, the GAN had somehow grasped certain unspoken truths about the world.

This was a big revelation for the research team. “There are certain aspects of common sense that are emerging”, said Bau. “It’s been unclear before now whether there was any way of learning this kind of thing [through deep learning].” That it is possible suggests that deep learning can get us closer to how our brains work than we previously thought—though that’s still nowhere near any form of human-level intelligence.

Other research groups have begun to find similar learning behaviours in networks handling other types of data, according to Bau. In language research, for example, people have found neuron clusters for plural words and gender pronouns.

Being able to identify which clusters correspond to which concepts makes it possible to control the neural network’s output. Bau’s group can turn on just the tree neurons, for example, to make the GAN paint trees, or turn on only the door neurons to make it paint doors. Language networks, similarly, can be manipulated to change their output, for instance to swap the gender of the pronouns while translating from one language to another. “We’re starting to enable the ability for a person to do interventions to cause different outputs”, said Bau.
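As a rough illustration of what such an intervention looks like, here is a minimal sketch. It assumes the concept units have already been identified; the unit names, the weights in `WEIGHTS` and the `paint` helper are all hypothetical, and a simple linear map stands in for a real generator. The point is only that overriding a few activations before rendering changes what gets painted.

```python
# Hypothetical unit-to-pixel weights for a 4-pixel toy "image".
# Pixels 0-1 are where trees appear, pixel 2 is a door, pixel 3 is sky.
WEIGHTS = {
    "tree_unit": [1.0, 1.0, 0.0, 0.0],
    "door_unit": [0.0, 0.0, 1.0, 0.0],
    "sky_unit": [0.0, 0.0, 0.0, 1.0],
}


def paint(activations, overrides=None):
    """Render the image, replacing any activations named in overrides."""
    acts = dict(activations)
    acts.update(overrides or {})  # the intervention: force chosen units
    image = [0.0] * 4
    for unit, value in acts.items():
        for p, w in enumerate(WEIGHTS[unit]):
            image[p] += value * w
    return image


# What the toy network would paint on its own: a door and sky, no trees.
original = {"tree_unit": 0.0, "door_unit": 1.0, "sky_unit": 1.0}

untouched = paint(original)
with_trees = paint(original, {"tree_unit": 1.0})    # switch tree unit on
without_door = paint(original, {"door_unit": 0.0})  # switch door unit off

print(untouched)
print(with_trees)
print(without_door)
```

The caller never edits the weights, only the activations for one rendering, which is why the same trained network can be steered towards different outputs.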

“The problem with AI is that in asking it to do a task for you, you’re giving it an enormous amount of trust”, said Bau. “You give it your input, it does its ‘genius’ thinking, and it gives you some output. Even if you had a human expert who is super smart, that’s not how you’d want to work with them either.”