As the world grew worried about algorithmic bias, there were fears that Banjo’s AI algorithm might have been favouring white people. The Utah attorney general's office opened an investigation into matters of privacy, algorithmic bias, and discrimination.

However, an audit and report found no bias in the algorithm because there was no algorithm to assess in the first place.

Utah State Auditor John Dougall said that Banjo does not use techniques that meet the industry definition of artificial intelligence.

Banjo indicated it had an agreement to gather data from Twitter, but there was no evidence of any Twitter data incorporated into Live Time.

So rather than opening one can of worms, the case opened another. It demonstrates why government officials must evaluate claims made by companies vying for contracts, and how failing to do so can cost taxpayers millions of dollars.

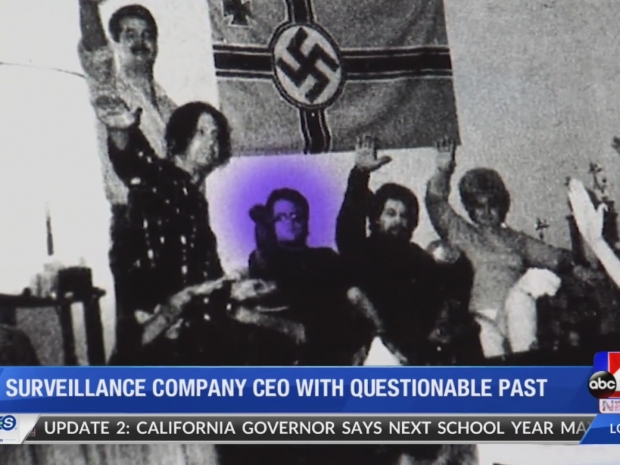

Companies selling surveillance software can make false claims about their technologies' capabilities or turn out to be charlatans or white supremacists, constituting a public nuisance or worse.

The audit result also suggests that a lack of scrutiny can undermine public trust in AI and in the governments that deploy it.