One good example of artificial intelligence, actually, is politicians spouting about a subject they don't understand. It's certainly not intelligence.

Another fair example of artificial intelligence is the bore you meet in a pub or at a dinner party who drones on about Heidegger, Wittgenstein or indeed Einstein's Theory of Relativity when he – and it's usually a he – hasn't a clue what he's talking about. I'm not saying, by the way, that I have more than the vaguest notion about Wittgenstein, Einstein's Theory or Heidegger, so I'm not going to bore you by pretending that I do know.

Intelligence is a knotty topic that people have argued about for millennia, with some having the nous to even venture rational opinions on the subject.

As yet another press release plops into our already full inbox, we note that spin doctors all over the world feel it's necessary to plug the phrase “artificial intelligence” into their already hype-full vocabularies.

So, now that the rant is over, I'm going to have a stab at getting to the heart of AI, and I'm ready to admit that where politicians and public relations personnel have failed, I am exposing my Achilles' heel to readers of Fudzilla.

I've worked for around 35 years in the technology industry – starting as a technical writer, doing some coding and then writing about it all as a technology journalist. Coding, of course, is based on the if-then-else principle, and although it was possible to write software that could broadly be described as “artificial intelligence”, limitations on the hardware side meant it couldn't really do very much.

With increased processing power and better storage, computers are way, way more powerful now than when I was writing code for the early x86 and other computers. What's more, unlike the human mind, computers can work at extremely high speeds and in parallel. Humans can't really think in parallel, although of course our bodies certainly do work in parallel – hearts beating, blood circulating, food digesting, neurons firing and the rest.

So artificial intelligence is really a superfast simulation of how some aspects of the human mind work, uncomplicated by other factors that come into our lives and affect our thinking, such as emotions, aches and pains and the vast amount of data flooding into our brains through the five senses. And computers can't daydream or sleep.

Heidegger, the German philosopher, said: “The most thought-provoking thing in our thought-provoking time is that we are still not thinking.” As philosophers and thinkers through the ages and from different cultures have struggled with the nature of intelligence, it's trite for the computer industry and for politicians to say that intelligence, even “artificial intelligence”, is clear-cut.

The move to AI in fields such as accountancy and financial transactions represents just the latest human development in labour-saving devices: human clerks totting up figures manually were superseded by mechanical and then electronic calculators, and horses were displaced by the internal combustion engine. AI is an example of a labour-saving device. But programming a computer, however powerful, with the ability to cry, to laugh and to sleep is going to be very hard when we ourselves don't really understand why we do the things we do.

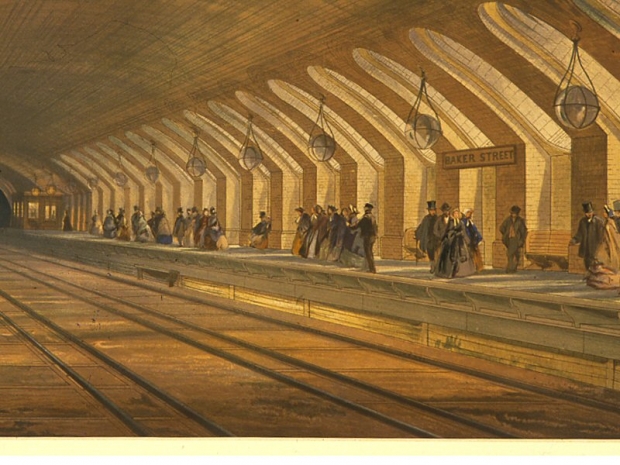

[Image of a steam-powered Baker Street underground railway line in the Victorian era. Image © TfL, London Transport Museum Collection]