Since the A100 Tensor Core GPU supports the PCIe Gen 4 interface, it is no big surprise that Nvidia went for the AMD platform, especially considering that the EPYC 7742, based on the Zen 2 "Rome" architecture, packs 64 cores and 128 threads per CPU, and the Nvidia DGX A100 has two of those CPUs.

The CPU in question runs at a 2.25GHz base clock and up to a 3.4GHz boost clock, has 32MB of L2 and 256MB of L3 cache, and supports up to 4TB of DDR4-3200 memory across eight memory channels. This Socket SP3 server CPU from AMD has a TDP of 225W.
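To put the memory figures in perspective, here is a rough back-of-the-envelope sketch of the theoretical peak DRAM bandwidth. It assumes the eight controllers correspond to eight standard 64-bit DDR4 channels; the channel width and the variable names are assumptions of this sketch, not figures from AMD or Nvidia, and real-world throughput will be lower.

```python
# Theoretical peak DRAM bandwidth for the dual EPYC 7742 setup.
# ASSUMPTION: eight 64-bit (8-byte) DDR4 channels per socket, one per controller.
TRANSFERS_PER_SECOND = 3200e6  # DDR4-3200 means 3200 mega-transfers per second
BYTES_PER_TRANSFER = 8         # assumed 64-bit channel width
CHANNELS_PER_SOCKET = 8
SOCKETS = 2                    # the DGX A100 has two CPUs


def peak_bandwidth_gb_s(channels: int) -> float:
    """Return the theoretical peak bandwidth in GB/s for a number of channels."""
    return TRANSFERS_PER_SECOND * BYTES_PER_TRANSFER * channels / 1e9


per_socket = peak_bandwidth_gb_s(CHANNELS_PER_SOCKET)
system = per_socket * SOCKETS
print(f"{per_socket:.1f} GB/s per socket, {system:.1f} GB/s across both CPUs")
```

These are upper bounds under the stated assumptions, not measured numbers.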

In addition to the two EPYC 7742 CPUs, the DGX A100 packs 1TB of RAM, two 1.92TB M.2 NVMe SSDs for the OS, and 15TB of internal storage from four 3.84TB U.2 NVMe drives. For storage and networking, it has eight single-port Mellanox ConnectX-6 VPI HDR InfiniBand adapters and a single dual-port ConnectX-6 VPI Ethernet adapter, all capable of delivering 200Gb/s.
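The storage and networking totals can be checked with a quick sketch. It uses only the drive sizes and port counts quoted above; treating 200Gb/s as a per-port figure is an assumption of this sketch, and the variable names are purely illustrative.

```python
# Tally the DGX A100 storage and network figures quoted in the article.
os_drives_tb = [1.92] * 2    # two M.2 NVMe SSDs for the OS
data_drives_tb = [3.84] * 4  # four U.2 NVMe drives for internal storage

infiniband_ports = 8 * 1     # eight single-port adapters
ethernet_ports = 1 * 2       # one dual-port adapter
PORT_SPEED_GBPS = 200        # ASSUMPTION: 200Gb/s applies to each port

os_total_tb = round(sum(os_drives_tb), 2)
data_total_tb = round(sum(data_drives_tb), 2)
infiniband_total_gbps = infiniband_ports * PORT_SPEED_GBPS
infiniband_total_gbytes = infiniband_total_gbps / 8  # bits to bytes

print(f"OS storage:   {os_total_tb} TB")
print(f"Data storage: {data_total_tb} TB (marketed as 15TB)")
print(f"InfiniBand:   {infiniband_total_gbps} Gb/s aggregate "
      f"({infiniband_total_gbytes:.0f} GB/s)")
print(f"Ethernet:     {ethernet_ports * PORT_SPEED_GBPS} Gb/s aggregate")
```

The aggregate network numbers are theoretical line rates under that per-port assumption.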

Packed in a 6U form factor, the DGX A100 can push 5 petaFLOPS of AI and 10 petaOPS of INT8 compute performance with its eight Nvidia A100 Tensor Core GPUs, six Nvidia NVSwitches, and 320GB of total VRAM.
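Dividing the system totals by the GPU count gives a feel for what each A100 contributes. The sketch below simply assumes the quoted figures are split evenly across the eight GPUs; the names are illustrative.

```python
# Per-GPU share of the DGX A100 system totals quoted in the article.
# ASSUMPTION: totals are divided evenly across all eight GPUs.
GPU_COUNT = 8
TOTAL_VRAM_GB = 320
TOTAL_AI_PETAFLOPS = 5
TOTAL_INT8_PETAOPS = 10

vram_per_gpu_gb = TOTAL_VRAM_GB / GPU_COUNT
ai_tflops_per_gpu = TOTAL_AI_PETAFLOPS * 1000 / GPU_COUNT  # peta to tera
int8_tops_per_gpu = TOTAL_INT8_PETAOPS * 1000 / GPU_COUNT

print(f"VRAM per GPU: {vram_per_gpu_gb:.0f} GB")
print(f"AI per GPU:   {ai_tflops_per_gpu:.0f} TFLOPS")
print(f"INT8 per GPU: {int8_tops_per_gpu:.0f} TOPS")
```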

The DGX A100 runs Ubuntu Linux, weighs a stunning 123kg (271lbs), and draws a maximum of 6.5kW of power.

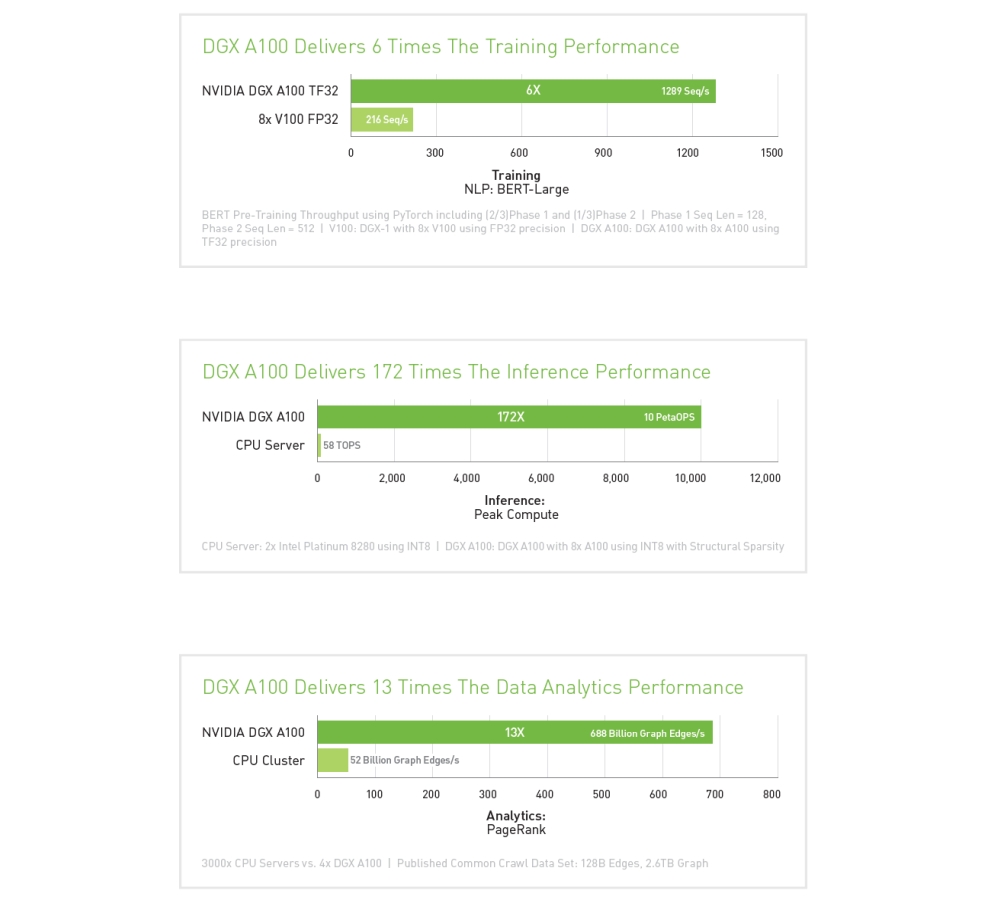

In terms of performance, Nvidia claims that a single DGX A100 system delivers six times the training performance of a system with eight V100 GPUs and up to 172 times the inference performance of a dual Intel Xeon Platinum 8280 server. For data analytics, four DGX A100 systems are said to be 13 times faster than 3,000 CPU servers.

As we wrote earlier, the DGX A100 should be immediately available and shipping worldwide, with the first customer being the U.S. Department of Energy’s (DOE) Argonne National Laboratory. It starts at $199,000, which is not a big surprise considering it packs some serious equipment.