Rob has worked for IBM for almost two decades, first as Fellow and Chief Architect for the Service Oriented Architecture (SOA) foundation. His current project is Watson, the company’s cognitive computing technology that aims to change the way machines interact with humans at the linguistic, emotional and semantic levels.

"Cognitive Computing" is like intelligence amplification for humans

If we were to put “cognitive computing” into a category, it would probably look more like “intelligence amplification” (IA) than Artificial Intelligence (AI). This is the idea that machine learning can amplify human knowledge and capabilities and aid day-to-day procedural decisions.

IBM Watson, located at the company's research center in Yorktown Heights, New York (via Wikipedia)

“Watson processes information by understanding natural language, generates hypotheses based on evidence, and, because it becomes more capable and precise, Watson will help leaders and organizations make better, more confident decisions.”

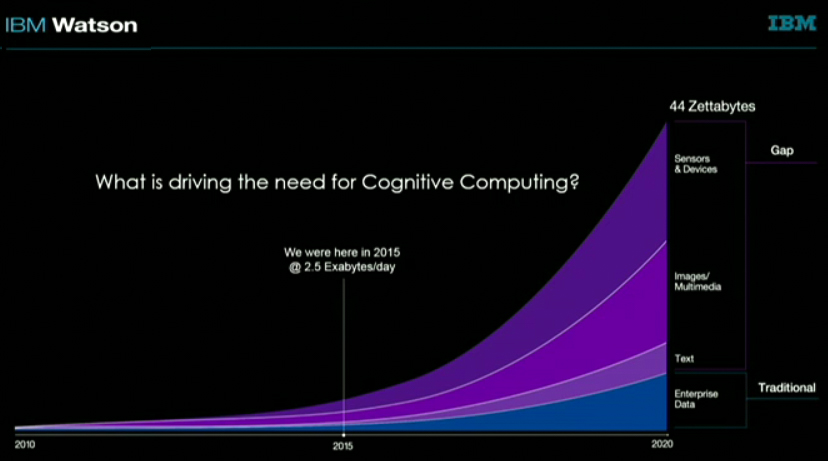

The world is producing excessive amounts of "unstructured data" that need to be reconstructed

Rob High opened the keynote by pointing out that the world is rapidly accumulating large amounts of unstructured data that cannot be readily understood in raw form. As of 2015, roughly 2.5 exabytes of data were being generated per day, and the world's total volume of data is projected to reach a stunning 44 zettabytes by 2020.

Humans and machines are charted to produce 44 zettabytes of data by 2020

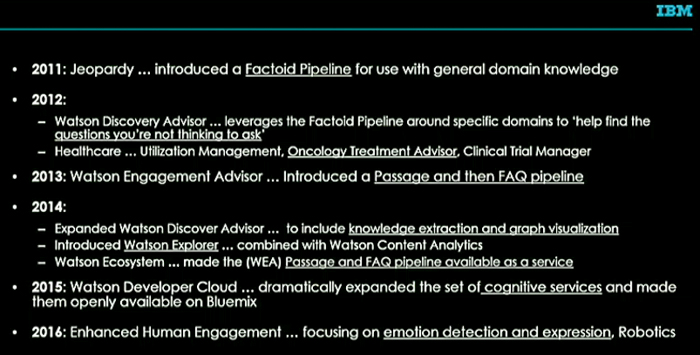
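
To put the units quoted above in perspective, here is a minimal sketch that annualizes the 2.5-exabyte daily figure and expresses 44 zettabytes in exabytes. It assumes decimal SI units (1 EB = 10^18 bytes, 1 ZB = 10^21 bytes) and a 365-day year; the function names are illustrative and do not come from the keynote.

```python
# Decimal (SI) byte units; the article's figures are assumed to use these.
EXABYTE = 10**18
ZETTABYTE = 10**21


def exabytes_per_day_to_zettabytes_per_year(eb_per_day, days=365):
    """Annualize a daily data rate given in exabytes, returning zettabytes."""
    return eb_per_day * EXABYTE * days / ZETTABYTE


def zettabytes_to_exabytes(zb):
    """Express a zettabyte figure in exabytes (1 ZB = 1,000 EB)."""
    return zb * ZETTABYTE / EXABYTE


yearly_zb = exabytes_per_day_to_zettabytes_per_year(2.5)
print(f"2.5 EB/day is roughly {yearly_zb:.2f} ZB/year")
print(f"44 ZB is {zettabytes_to_exabytes(44):,.0f} EB")
```

In other words, a steady 2.5 exabytes per day adds up to a little under one zettabyte per year, which gives a sense of how large a 44-zettabyte figure is.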

Five years ago, IBM’s Watson Discovery Advisor introduced a “factoid pipeline” that takes large datasets of general day-to-day knowledge and analyzes cultural references and emotional cues to better interact with ordinary people.

Image credit: NextPlatform.com

In 2013, the company introduced a “passage then FAQ” pipeline. This one was more focused on engaging consumers, figuring out an individual’s life interests, and then helping them make better day-to-day procedural decisions. One year later, the advisor was improved to include graph visualization and knowledge extraction.

IBM’s Watson Discovery Advisor uses GPU-accelerated deep learning to do natural language processing (NLP), speech recognition, and computer vision to solve problems specific to particular industries. For example, the company has a cloud-based Speech to Text and Text to Speech translator that combines with a “Question and Answer” API to engage in a conversation with a human being.

In November 2015, IBM announced that it would incorporate Nvidia Tesla K80 GPUs into its Watson natural language supercomputer. Coupled with IBM’s 12-core Power8 RISC-based CPUs, the Tesla K80 GPU acceleration raises Watson’s processing power to 10 times its prior level. With OpenPower system optimizations, IBM now has 40 times more inferencing throughput. Using GPUs, the company also gets 8.5 times more throughput in training tasks. A server with two Power8 CPUs can now increase the number of documents Watson Concept Insights can concurrently index from seven to 60.

An example of a convolutional neural network (via HardwareZone.com.sg)

Ultimately, the company wants to improve its machine learning techniques with deep learning. This can be accomplished through three tasks – modeling "high-level" data abstractions, using model architectures with multiple layers of non-linear transforms, and overcoming challenges of designing hand-crafted features for tasks.
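
As a rough illustration of what "multiple layers of non-linear transforms" means in practice, here is a minimal sketch of a forward pass through a small fully connected network in plain Python. It is not Watson's architecture: the layer sizes, the random weights, the ReLU non-linearity, and every function name are assumptions chosen only to show the idea of stacking layers so that later ones operate on progressively more abstract representations of the raw input.

```python
import random


def dense(inputs, weights, biases):
    """Affine transform: one output value per row of `weights`."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    """Element-wise non-linearity: negative values are clipped to zero."""
    return [max(0.0, v) for v in values]


def init_layer(n_in, n_out, rng):
    """Create random weights and zero biases for one layer (illustrative only)."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(inputs, layers):
    """Apply each layer in turn, with ReLU after every layer except the last."""
    activation = inputs
    for i, (weights, biases) in enumerate(layers):
        activation = dense(activation, weights, biases)
        if i < len(layers) - 1:
            activation = relu(activation)
    return activation


rng = random.Random(0)           # fixed seed so the sketch is repeatable
layer_sizes = [4, 8, 8, 2]       # hypothetical: 4 raw features, 2 outputs
layers = [init_layer(n_in, n_out, rng)
          for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]

raw_input = [0.5, -1.2, 3.0, 0.1]
output = forward(raw_input, layers)
print(output)
```

In a real system the weights would be learned from data rather than drawn at random, which is what removes the need to hand-craft features for each task.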

IBM says Watson should perform more context-aware tasks for consumers, including making transactions, guiding users through step-by-step processes, helping users navigate websites, and providing product suggestions and decision support.

IBM Watson APIs as of 2016

Today, the company has 32 services available on the Watson Developer Cloud hosted on its Bluemix platform-as-a-service.

Going forward into 2016, the focus of the Watson team will be on understanding human personalities based on text. One of our favorite examples of this service is the Tone Analyzer, which uses mathematical probabilities to figure out the underlying emotions of a written paragraph or sentence. This is particularly useful for writing important emails, letters and stories to audiences where first impressions are critical. The utility is available to try as a free demonstration here.
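
To give a feel for how text can be turned into emotion probabilities, here is a deliberately simplified toy sketch. It is not the Tone Analyzer service or its API, and it does not reflect how IBM computes tones: the tiny word lists, the tone labels, and the `tone_probabilities` function are all hypothetical, and the softmax over keyword counts is a stand-in technique chosen only for illustration.

```python
import math
import re

# Hypothetical mini-lexicon, invented for this example only.
TONE_LEXICON = {
    "joy": {"happy", "delighted", "thanks", "great", "love"},
    "anger": {"angry", "furious", "unacceptable", "hate"},
    "sadness": {"sorry", "sad", "regret", "unfortunately"},
}


def tone_probabilities(text):
    """Count lexicon hits per tone, then normalize the counts with a softmax."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = {tone: sum(word in vocab for word in words)
              for tone, vocab in TONE_LEXICON.items()}
    exps = {tone: math.exp(count) for tone, count in counts.items()}
    total = sum(exps.values())
    return {tone: value / total for tone, value in exps.items()}


scores = tone_probabilities("Thanks for the great demo, I love it!")
for tone, probability in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{tone}: {probability:.2f}")
```

The scores always sum to one, so a sentence with no lexicon hits comes out evenly split across the tones rather than being forced into one of them.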

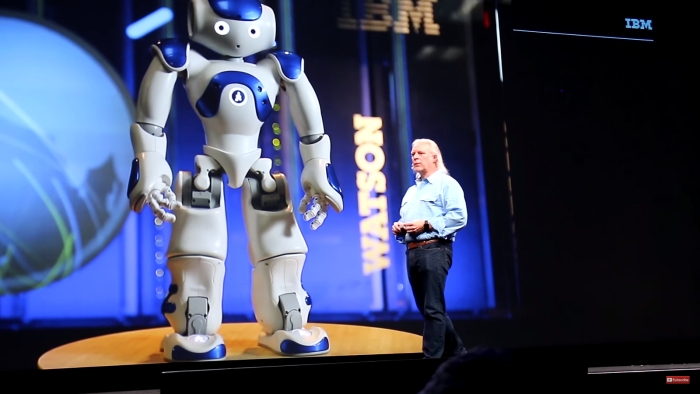

Self-learning robots powered by Watson

During the keynote, the IBM chief also introduced Connie, a self-learning "concierge robot" powered by the company's question-answering system, with an ability to sing and dance to a Taylor Swift song (we will leave out the video for your ears' sake).

Image credit: HotHardware.com (via YouTube)

Of course, these advancements fall in line for the most part with Nvidia CEO Jen-Hsun Huang's keynote slide on the "expanding universe of modern AI." IBM's Watson is listed under "AI-as-a-service," and we expect its 32 services available in the Watson Developer Cloud will only grow in time as business professionals, individuals and scientists all begin to grasp the basic need to reconstruct much of the world's "unstructured data" into meaningful information.

“Coping with Humans”: A Support Group for Bots with Carrie Fisher