Nvidia apparently thought there was enough of a performance and price gap between the GTX 650 Ti and GTX 660 to justify another card, so it went ahead and developed the GTX 650 Ti Boost, which should plug the gap nicely. It is also worth noting that AMD introduced another mid-range card at about the same time, and that’s what it usually takes to get Nvidia’s tail wagging.

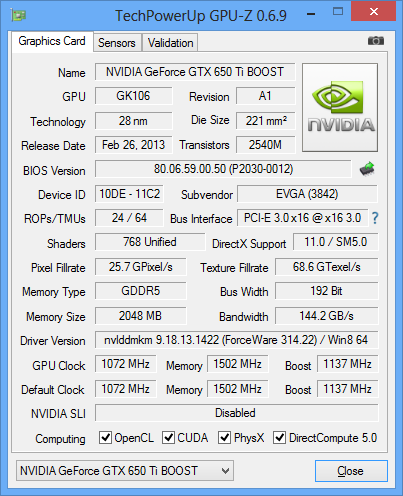

As the name suggests, the GTX 650 Ti Boost features Nvidia’s GPU Boost technology, which was not employed on the plain GTX 650 Ti. GPU Boost allows the card to boost GPU clocks depending on load and temperature.

A simple clock boost with no additional changes would not have ensured the new Boost card a significant performance edge over the GTX 650 Ti. Luckily Nvidia had a few other tricks up its sleeve, so it widened the 128-bit memory bus to 192 bits, greatly improving bandwidth on the new card. In fact, thanks to the new bus and speedier memory chips, the new card has 66 percent more bandwidth compared to the plain GTX 650 Ti.
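The bandwidth claim is easy to sanity-check. The sketch below is a back-of-the-envelope calculation, not Nvidia's own figures: it uses the 192-bit bus and 6008MHz effective memory speed listed for the Boost card in this review, and assumes a 5400MHz effective memory speed for the plain GTX 650 Ti, which is not stated here.

```python
def memory_bandwidth_gbs(bus_width_bits, effective_rate_mhz):
    """Peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_rate_mhz * 1e6 / 1e9


# GTX 650 Ti Boost: 192-bit bus, 6008MHz effective (figures from this review).
boost_bw = memory_bandwidth_gbs(192, 6008)

# Plain GTX 650 Ti: 128-bit bus (from this review); the 5400MHz effective
# memory speed is an assumption, it is not quoted in the article.
plain_bw = memory_bandwidth_gbs(128, 5400)

gain_percent = (boost_bw / plain_bw - 1) * 100

print(f"GTX 650 Ti Boost: {boost_bw:.1f} GB/s")
print(f"GTX 650 Ti:       {plain_bw:.1f} GB/s")
print(f"Bandwidth gain:   {gain_percent:.1f}%")
```

Under these assumptions the gain works out to roughly two thirds, which is in line with the figure quoted above.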

The basic design of Nvidia’s GTX 650 Ti, GTX 650 Ti Boost and GTX 660 revolves around GK106 silicon. The GTX 650 Ti and GTX 650 Ti Boost feature 768 CUDA cores, while the GTX 660 has 960. One SMX module on the GTX 650 Ti and GTX 650 Ti Boost is disabled, so they end up with fewer cores. The GTX 650 Ti Boost features 64 texture units, while the GTX 660 has 80. However, the Boost card shares some features with the GTX 660 as well, namely the 192-bit memory bus and 24 ROPs. Both the Boost card and the GTX 660 ship with 2GB of GDDR5 memory.
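The core and texture unit counts follow directly from the number of active SMX modules. Here is a minimal sketch, assuming the usual Kepler layout of 192 CUDA cores and 16 texture units per SMX (these per-SMX figures are not stated in this review) and a full GK106 with five SMX modules:

```python
# Per-SMX resources on Kepler. Assumption: not quoted in the review itself.
CORES_PER_SMX = 192
TEXTURE_UNITS_PER_SMX = 16


def shader_config(active_smx):
    """Return (CUDA cores, texture units) for a given number of active SMX modules."""
    return active_smx * CORES_PER_SMX, active_smx * TEXTURE_UNITS_PER_SMX


# GTX 660: all five SMX modules enabled (assumed full GK106).
gtx_660 = shader_config(5)

# GTX 650 Ti and GTX 650 Ti Boost: one SMX disabled, four remain.
gtx_650_ti_boost = shader_config(4)

print("GTX 660:", gtx_660)
print("GTX 650 Ti Boost:", gtx_650_ti_boost)
```

With those assumptions, four SMX modules give the 768 cores and 64 texture units quoted above, and five give the GTX 660's 960 and 80.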

We didn’t have a chance to try out a reference card, but we got something a bit better instead, EVGA’s GTX 650 Ti Boost Superclocked. The Superclocked card features base GPU clock at 1072MHz, while GPU Boost ups the clock to 1137MHz.

As you can see from the table below, the reference-clocked GTX 650 Ti Boost is quite a bit slower than EVGA’s Superclocked card. EVGA did not overclock the memory, though.

| GTX 650 Ti Boost | |
| --- | --- |
| Base Clock Speed | 980MHz |
| Typical Boost Clock | 1033MHz |
| OC Boost | 1100MHz+ |
| CUDA Cores | 768 |
| SMX Units | 4 |
| Memory Speed | 6008MHz |
| Memory Interface | 192-bit |
| Memory Controller | 3x64-bit |
| Memory Capacity | 2048MB GDDR5 |
| Typical Draw (non-TDP Apps) | 115W |
| Typical Draw (non-TDP Apps) with slider at 110% | 127W |
| Power Connectors | 1x 6-pin PCIe |
| Length | 9.5” |
| Display Outputs | 2x dual-link DVI, HDMI, DisplayPort |
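To put EVGA's factory overclock in perspective, the short sketch below compares the Superclocked card's clocks (1072MHz base, 1137MHz boost) with the reference clocks from the table. It only restates the review's numbers as percentages; the function name is ours.

```python
def overclock_percent(reference_mhz, factory_mhz):
    """Factory overclock expressed as a percentage of the reference clock."""
    return (factory_mhz / reference_mhz - 1) * 100


base_gain = overclock_percent(980, 1072)     # base clock, reference vs EVGA
boost_gain = overclock_percent(1033, 1137)   # typical boost clock, reference vs EVGA

print(f"Base clock:  +{base_gain:.1f}%")
print(f"Boost clock: +{boost_gain:.1f}%")
```

Both figures land at around 10 percent, so the Superclocked card should have a modest but measurable edge over a reference board in GPU-bound scenarios.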

The EVGA GTX 650 Ti Boost Superclocked is supposed to deliver high visual quality in games, with in-game detail settings cranked all the way up to High. We’ll see whether it lives up to this promise in our benchmarks. All we can say so far is that it is looking good, at least on paper and in GPU-Z.

Following the introduction of the GTX 650 Ti Boost, Nvidia’s complete Geforce 6xx lineup consists of the following cards.

- GeForce GTX TITAN (GK110)
- GeForce GTX 690 (2xGK104)
- GeForce GTX 680 (GK104)
- GeForce GTX 670 (GK104)
- GeForce GTX 660 Ti (GK104)
- GeForce GTX 660 (GK106)
- GeForce GTX 650 Ti BOOST (GK106)
- GeForce GTX 650 Ti (GK106)
- GeForce GTX 650 (GK107)
- GeForce GT 640 (GK107)
- GeForce GT 630 (re-branded GeForce GT 440)
- GeForce GT 620
- GeForce GT 610

The GeForce GTX 650 Ti Boost graphics card delivers the usual feature set, plus GPU Boost, which is lacking from the plain GTX 650 Ti. For a limited time only, gamers who purchase select GeForce GTX 650 Ti Boost graphics cards will receive $75 of in-game credit for Hawken, World of Tanks, and Planetside 2 ($25 for each game).

The box is small and sturdy. You will get one Molex to 6-pin power adapter, a DVI to VGA dongle, an EVGA badge, a user guide, a quick start guide and a driver CD.

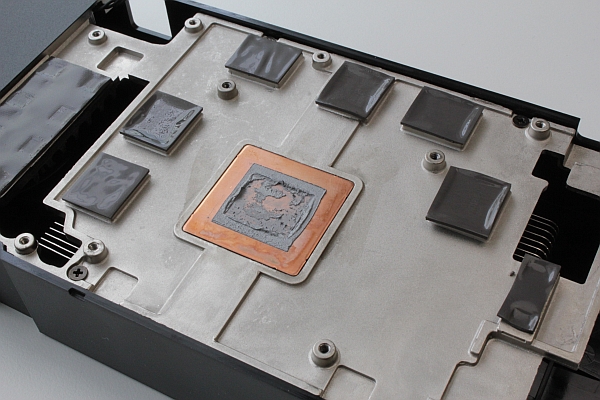

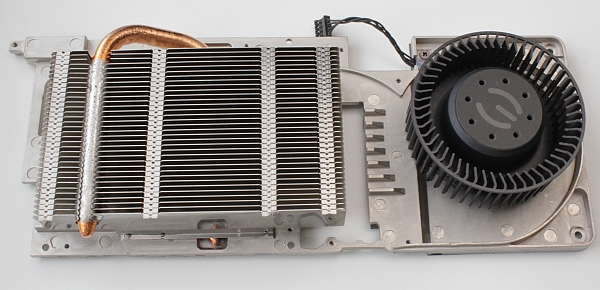

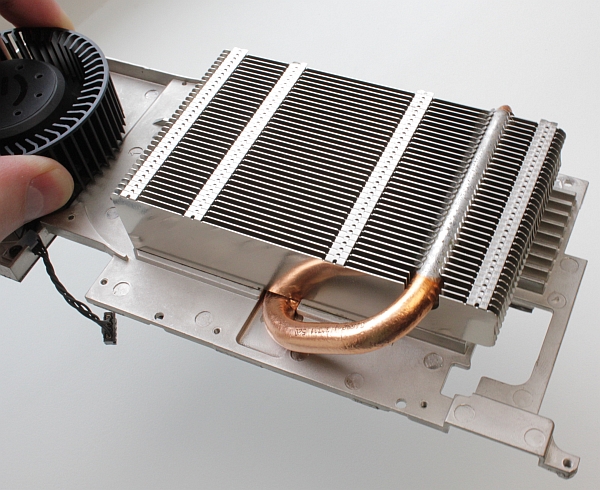

EVGA’s GTX 650 Ti Boost Superclocked uses a dual-slot cooler that looks much like the GTX 660 Ti’s. You have to look carefully to spot the differences, especially when comparing it with the GTX 660 Superclocked. Both the GTX 660 Superclocked and the GTX 650 Ti Boost Superclocked feature a single 6-pin power connector. The cooler is a blower-style affair and it’s two slots tall. No surprises here.

Nvidia limited the GTX 650 Ti Boost and GTX 660 to dual-card SLI, while faster cards in its lineup support triple SLI. In this price segment, this is a non-issue.

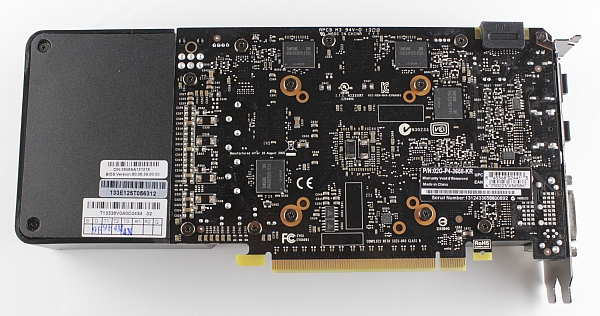

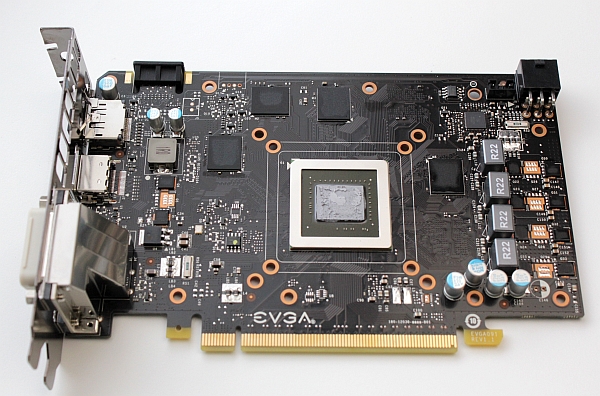

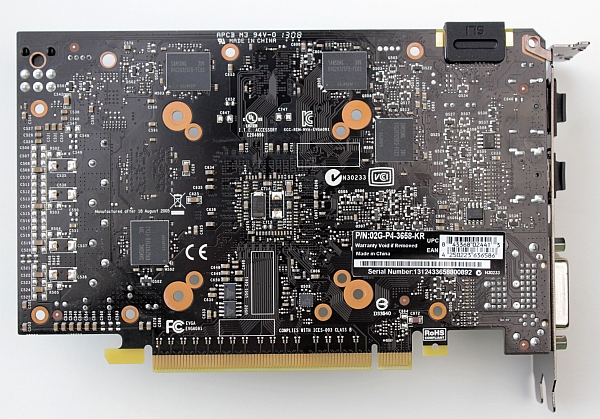

The standard GTX 650 Ti does not have an SLI connector, or SLI support for that matter. The PCB is 17.3cm long while the whole card measures in at 24.3cm, which is clear once you look at the card from the back.

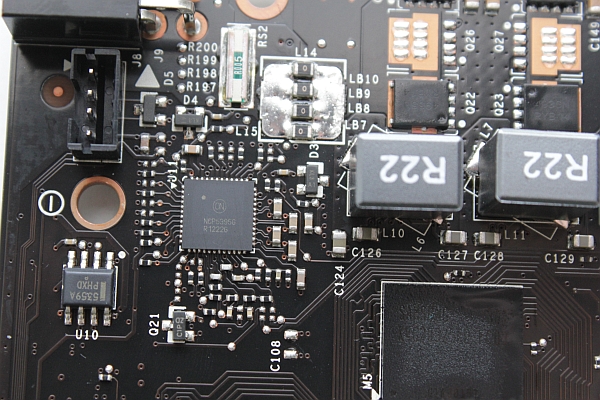

Nvidia did not put a lot of effort into reference heatsink design, so the heatsink only cools the GPU, leaving other low profile components exposed. EVGA’s card has a much better heatsink and cooler, borrowed from the GTX 660. The base clock on Boost Superclocked cards is higher than the reference clock, i.e. up from 980MHz to 1072MHz, but this didn’t prove too troublesome for the cooler.

The Samsung memory modules, K4G20325FD-FC03, are specified to run at 1500MHz (6000MHz GDDR5 effectively). EVGA’s GTX 650 Ti Boost Superclocked packs a total of 2GB of GDDR5 in eight memory modules. Four of the modules are placed on the back. The 192-bit memory bus, coupled with 6008MHz GDDR5, ensures plenty of bandwidth for the GPU. Additional memory would be overkill, since the GPU would give in to eye candy before the card runs out of memory.
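The memory figures above are related by simple arithmetic. The sketch below shows how the 1500MHz rating maps to the 6000MHz effective speed, and what eight modules imply for per-module capacity. The factor of four reflects the usual GDDR5 convention of four data transfers per memory clock; the helper names are ours.

```python
GDDR5_TRANSFERS_PER_CLOCK = 4  # usual GDDR5 convention: four transfers per memory clock


def effective_rate_mhz(memory_clock_mhz):
    """Effective (marketing) data rate for a given GDDR5 memory clock."""
    return memory_clock_mhz * GDDR5_TRANSFERS_PER_CLOCK


def capacity_per_module_mb(total_mb, modules):
    """Capacity of each module, assuming all modules are the same size."""
    return total_mb // modules


rated_effective = effective_rate_mhz(1500)      # Samsung's rated clock
per_module_mb = capacity_per_module_mb(2048, 8)  # 2GB spread over eight modules
per_module_gbit = per_module_mb * 8 / 1024       # megabytes to gigabits

print(f"Rated effective speed: {rated_effective}MHz")
print(f"Per module: {per_module_mb}MB ({per_module_gbit:.0f}Gbit)")
```

The card's 6008MHz effective memory speed is therefore a hair above the chips' rated 6000MHz.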

The GTX 650 Ti BOOST has a single SLI connector, which is usual for this price segment. So, it’s possible to boost performance and use two cards in SLI mode, but not more than that. Video outs include two dual-link DVIs (only one is VGA capable), standard HDMI and standard DisplayPort connector. Note that all four outputs can be used simultaneously.

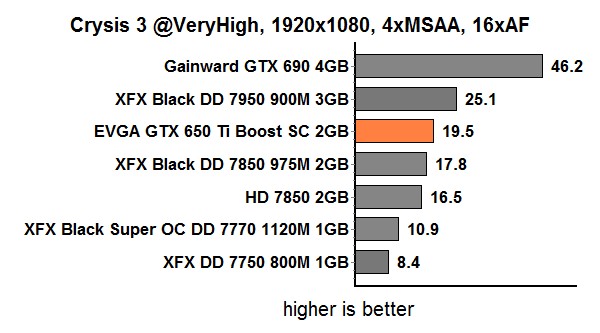

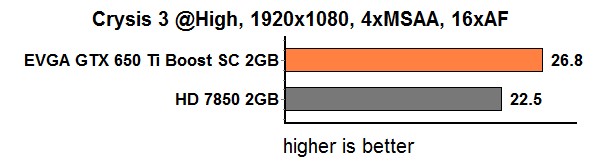

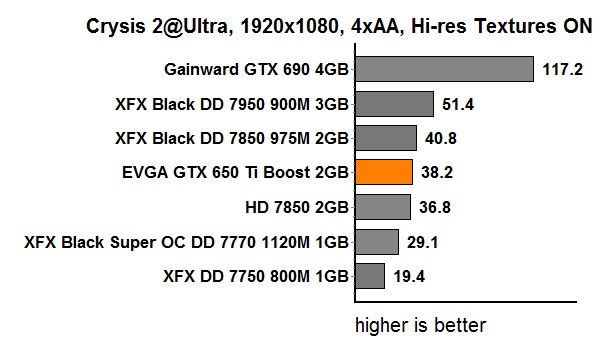

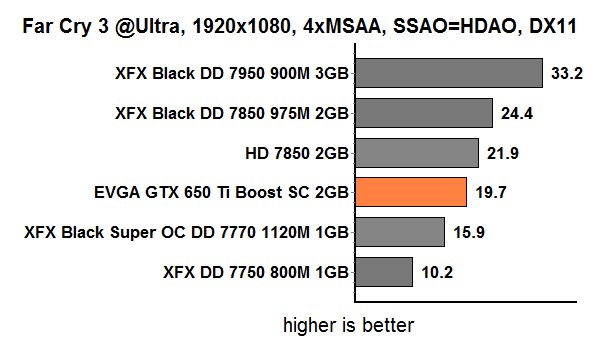

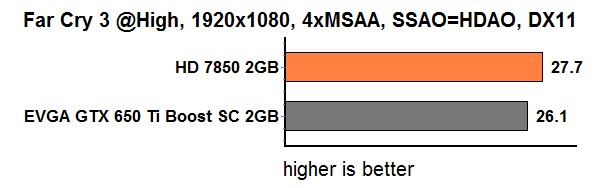

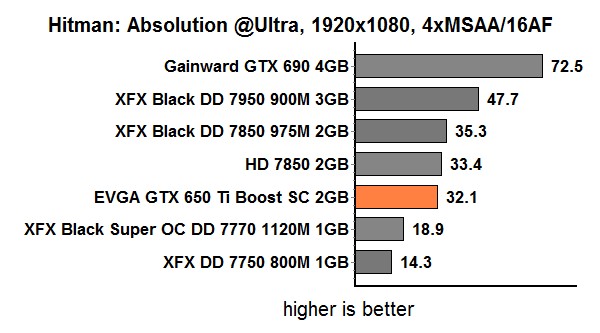

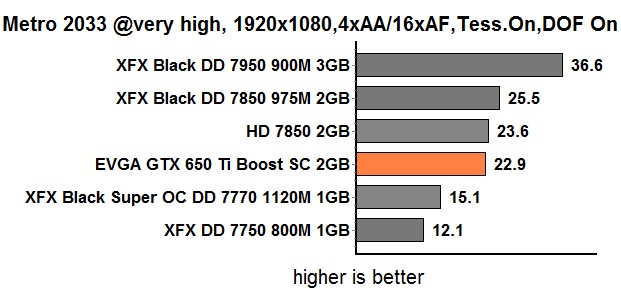

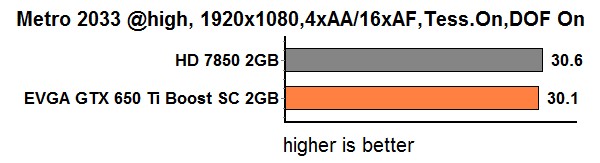

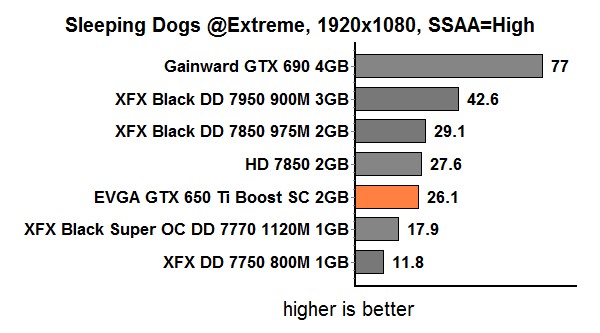

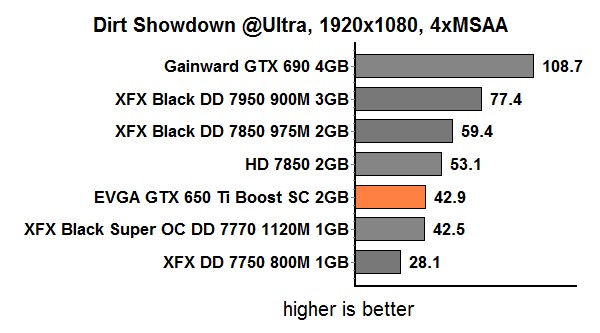

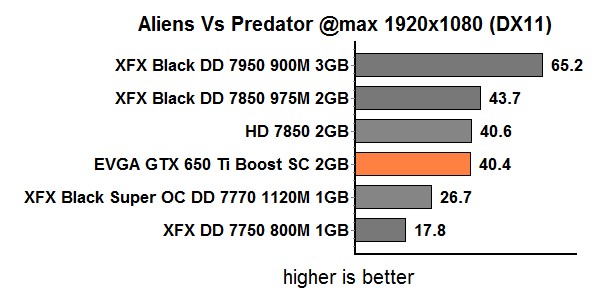

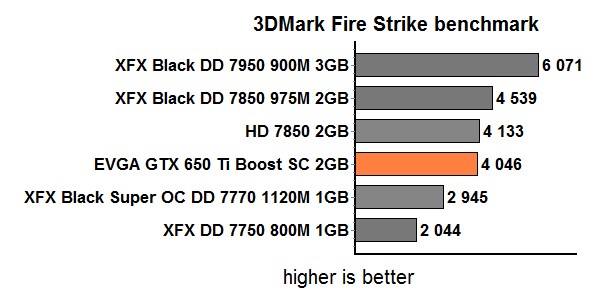

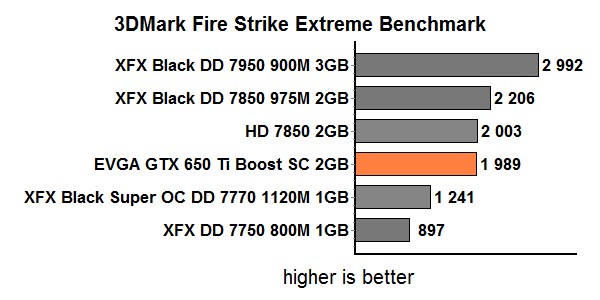

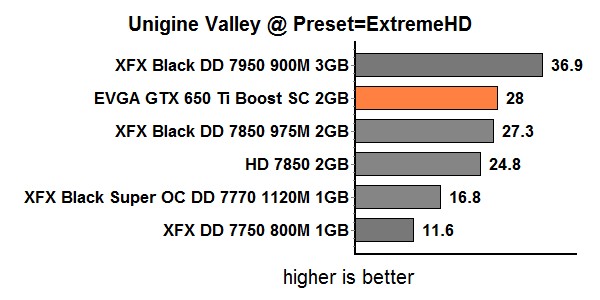

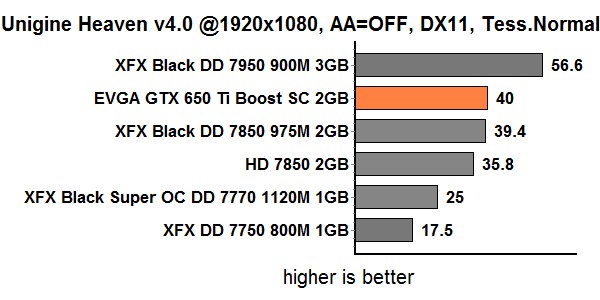

As usual, we tested all the games at maxed out detail settings. However, in games that prove unplayable at such settings in 1920x1080, we reduced the detail settings to High. The GTX 650 Ti Boost is supposed to hit the sweet spot for gamers on a budget, who still don’t want to miss out on 1080p gaming.

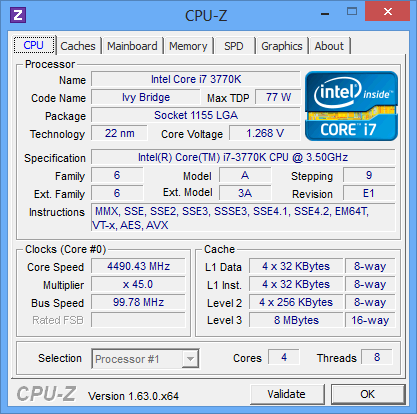

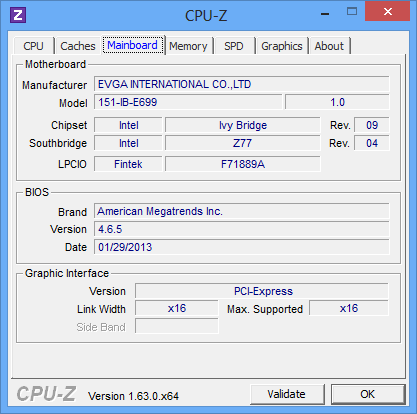

Testbed:

- Motherboard: EVGA Z77 FTW

- CPU: Ivy Bridge Core i7 3770 (4.5GHz)

- CPU Cooler: Gelid The Black Edition

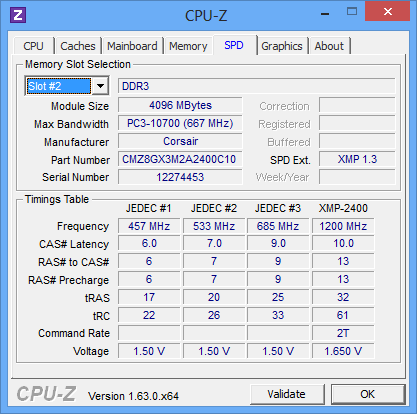

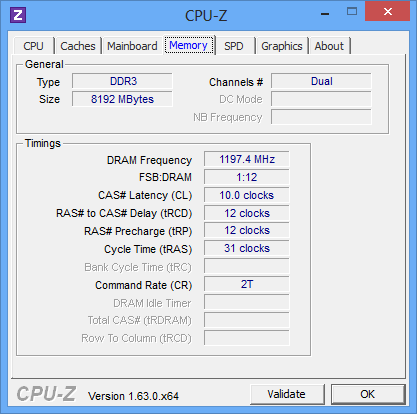

- Memory: 8GB Corsair DDR3 2400MHz

- Harddisk: Corsair Neutron GTX 240GB

- Power Supply: CoolerMaster Silent Pro 1000W

- Case: CoolerMaster Cosmos II Ultra Tower

- Operating System: Win8 64-bit

Drivers:

- Nvidia 314.22-whql

- AMD 13.3_Beta3

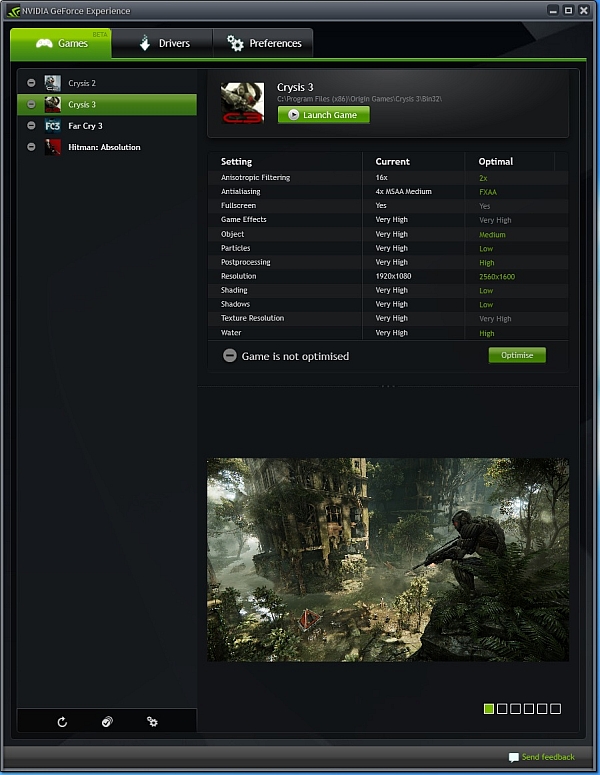

Geforce Experience is a new application from Nvidia that optimizes your PC in two key ways. First, Geforce Experience automatically notifies you of new Nvidia drivers and downloads them for you. Second, Geforce Experience optimizes graphics settings in your games based on your hardware configuration. Nvidia performs extensive game testing for various combinations of GPUs, CPUs, and monitor resolutions and stores this information in the Nvidia cloud. Geforce Experience connects to the Nvidia Cloud and downloads optimized game settings tailored specifically to your PC. The result is that your PC is always kept up to date and optimized for the latest games. Geforce Experience optimal settings are currently based on single GPU setups.

However, we doubt that anyone willing to invest in an SLI gaming rig will need Geforce Experience to begin with, as its target audience is not enthusiasts.

Following installation, you will be asked whether you want Geforce Experience to scan your system and find games suitable for your hardware. Only games that are installed on your computer and for which Geforce Experience has optimal settings will show up in the games list. Check to make sure your game is supported.

If one of your games should be supported by Geforce Experience but is not on the list, it is probably because the software only searches the locations specified in the Preferences > Games tab. Add the location where your game is installed and you’ll be good to go.

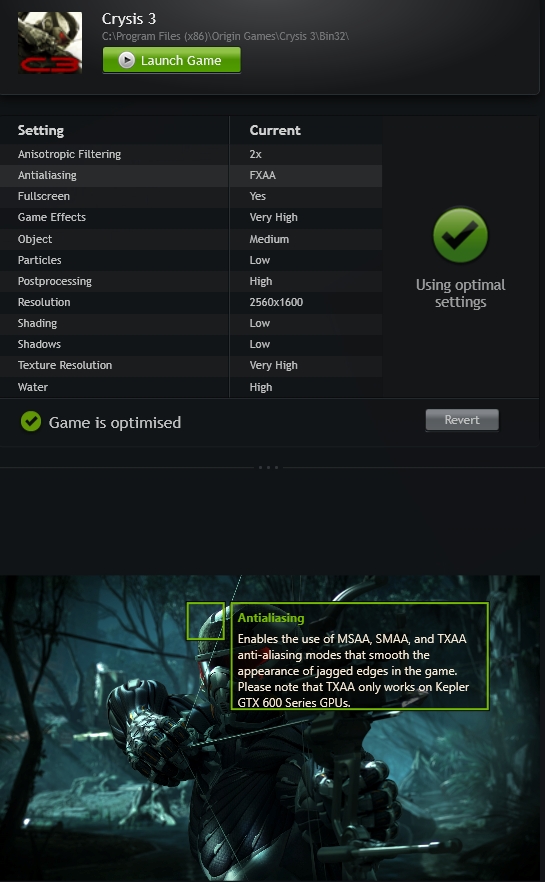

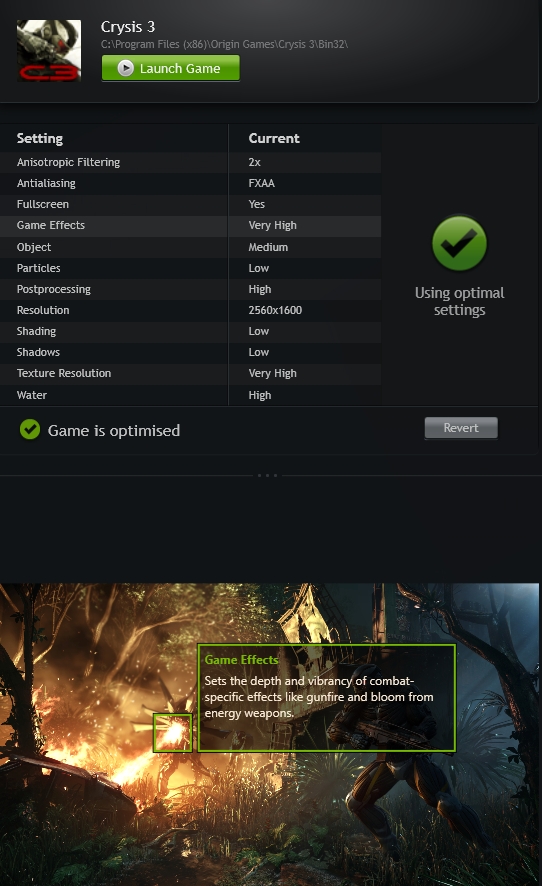

GeForce Experience targets 40 FPS (average) for its optimal settings. For example, we set Crysis 3 to Very High detail levels, but at these settings we got only about 19 FPS. Geforce Experience recognized that the settings were not optimized for our hardware and offered to take care of it. We accepted the kind offer, but we were rather amused when Geforce Experience suggested we increase the resolution to 2560x1600. Needless to say, the higher resolution was supported by our monitor. We decided to give it a go anyway, and Geforce Experience really did manage to optimize in-game settings and hit a 35 FPS average at 2560x1600.

For users who don’t know what Geforce Experience does in the background there is a simple display of examples, which should guide users through the process. As usual, it is a game of trade-offs, balancing image quality against resolution and performance.

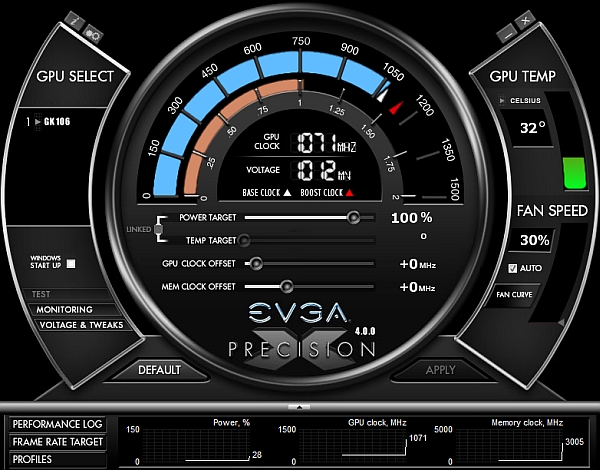

EVGA has a habit of rolling out new versions of its popular overclocking tool along with its new graphics cards, allowing consumers to get full support from launch day.

Key Features:

- GPU and Memory Frequency/Clock Offset

- Power Target Control (GeForce GTX Titan / 600)

- Temperature Target Control (GeForce GTX Titan)

- Pixel Clock Overclocking – OC your refresh rate!

- Frame Rate Target Control

- GPU Voltage Adjustment + Overvoltage (GeForce GTX Titan)

- Custom Fan Control/Fan Curve

- Profiling system allowing up to 10 profiles with hotkey

- Robust monitoring allowing ingame, system tray and/or Logitech LCD monitoring

- In game screenshot hotkey, supports BMP, PNG and JPG formats

- Custom skins including ones created by the EVGA community!

- Support for wireless Bluetooth overclocking via custom Android app

- Multi-language support: English, Dutch, French, Traditional Chinese, Japanese, Korean, Polish, Russian, Spanish, Portuguese

The software even supports non-EVGA cards and we suggest you give it a try, since it tends to offer a bit more than classic overclocking tools. You can learn more about it in EVGA’s Precision X FAQ. We managed to increase the GPU clock by 90MHz, while the memory clock easily went up by 200MHz (effectively 800MHz).
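For a sense of scale, the offsets translate into the following clocks and percentage gains. This is a rough sketch that assumes the 90MHz offset is applied on top of EVGA's 1072MHz base clock and that the 200MHz memory offset corresponds to 800MHz effective on top of 6008MHz, as quoted above.

```python
GDDR5_TRANSFERS_PER_CLOCK = 4  # 200MHz offset -> 800MHz effective, as in the review


def apply_offset(stock_mhz, offset_mhz):
    """Return (overclocked frequency, gain in percent) for a clock offset."""
    overclocked = stock_mhz + offset_mhz
    return overclocked, offset_mhz / stock_mhz * 100


# GPU: assumes the offset is added to the Superclocked card's base clock.
gpu_mhz, gpu_gain = apply_offset(1072, 90)

# Memory: work in effective MHz, so the 200MHz offset becomes 800MHz.
mem_offset_effective = 200 * GDDR5_TRANSFERS_PER_CLOCK
mem_mhz, mem_gain = apply_offset(6008, mem_offset_effective)

print(f"GPU base clock: {gpu_mhz}MHz (+{gpu_gain:.1f}%)")
print(f"Memory speed:   {mem_mhz}MHz effective (+{mem_gain:.1f}%)")
```

In relative terms the memory overclock is the larger of the two, which is worth keeping in mind given that EVGA left the memory at reference speed.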

EVGA GTX 650 Ti Boost Superclocked is completely silent while idling. While gaming, the fan can be audible, but it is quiet enough not to be considered a distraction. On the whole we were pleased, and the good temperature/noise ratio was attained thanks to EVGA’s custom cooler. As we can see from the screenshots, the temperatures were kept at bay, even with an overclocked GPU.

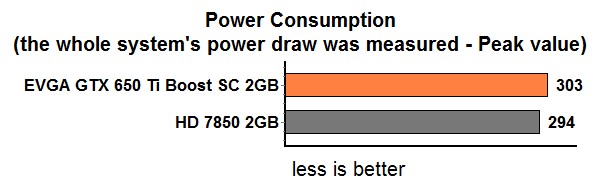

In terms of power consumption, the GTX 650 Ti Boost does rather well. The XFX Radeon HD 7850, which offers similar performance, consumes a bit less power than EVGA’s factory overclocked card.

The typical power draw for the reference card in so-called non-TDP apps is 115W. Nvidia claims the TDP is 140W. If we take the power slider to 110 percent, as is recommended for overclocking, we hit 127W in non-TDP apps. EVGA’s GTX 650 Ti Boost Superclocked uses slightly more power than the reference card. Since it is powered through the PCIe slot and a 6-pin power connector, it could theoretically draw up to 150W, so there is more than enough headroom. In any case GPU Boost should keep power consumption in check and throttle the clocks accordingly. We measured system consumption, without the monitor, of course.
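The power budget can be laid out explicitly. The sketch below assumes the standard limits of 75W from the PCIe x16 slot and 75W from a 6-pin PCIe connector, which is where the 150W ceiling comes from; the remaining figures are the ones quoted above.

```python
# Standard delivery limits (assumption: the review only quotes the 150W total).
PCIE_SLOT_LIMIT_W = 75
SIX_PIN_LIMIT_W = 75

TDP_W = 140            # Nvidia's claimed TDP
TYPICAL_DRAW_W = 115   # reference card, non-TDP apps
POWER_SLIDER = 1.10    # power target raised to 110 percent

available_w = PCIE_SLOT_LIMIT_W + SIX_PIN_LIMIT_W
raised_draw_w = TYPICAL_DRAW_W * POWER_SLIDER
headroom_over_tdp_w = available_w - TDP_W
headroom_over_raised_w = available_w - raised_draw_w

print(f"Available:            {available_w}W")
print(f"Draw at 110% slider:  {raised_draw_w:.1f}W")
print(f"Headroom over TDP:    {headroom_over_tdp_w}W")
print(f"Headroom over raised: {headroom_over_raised_w:.1f}W")
```

The 110 percent figure comes out at about 126.5W, which matches the 127W quoted for the raised power target once rounded up.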

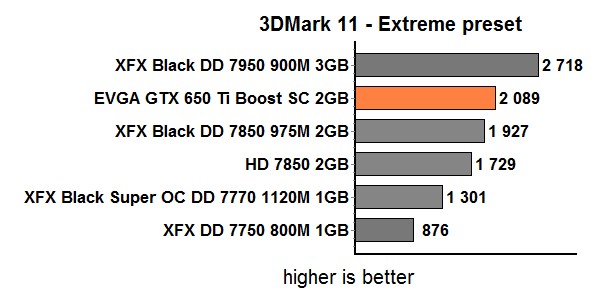

Nvidia already had two GTX 650 cards before it decided to roll out the Boost edition. Although the GTX 650 and GTX 650 Ti share the same DNA, they can’t keep up with demanding titles in 1080p. The GTX 650 Ti Boost proves Nvidia was right when it claimed it could deliver a mid-range card capable of comfortable 1080p gaming.

Nvidia originally claimed that the new card should outpace AMD’s Radeon HD 7850 1GB, but it turns out it is a good match for the Radeon HD 7850 2GB, both in terms of price and performance. At the moment you can pick up either of them for about €160. As far as performance goes, the differences are relatively small and the choice really comes down to which games you prefer more than anything else. It is also worth noting that AMD has EOLed HD 7850 1GB cards, but plenty of them will be in stock for a while longer. We are still not sure whether AMD will decide to drop the price of its HD 7850 2GB to counter Nvidia’s new card, but it is something worth considering if you are in the market for a mid-range upgrade.

EVGA’s GTX 650 Ti Boost Superclocked card costs about 10 euro more than the reference card, but it also offers a bit more performance and a much better cooler. It is a sound investment in our book.