Nvidia has a mid-life kicker. It is called the Geforce GTX 580 and, as we said before, the card is based on a “new”, improved GF100 chip that now bears the GF110 moniker. In reality it is a GF100 done right, with some optimisation at the transistor level, and the card itself has a new vapour chamber cooler that is really quiet, at least for a high-end card.

Does it really deserve the Geforce GTX 580 name? Well, continue reading and decide for yourself.

The GPU works at 772MHz while the shaders run at twice that clock, or 1544MHz. The card has 1.5GB of GDDR5 memory clocked to 2000MHz and paired with a 384-bit bus. It is the same size as the Geforce GTX 480, measuring 10.5 inches or 26.67 centimeters in length. It has one 6-pin and one 8-pin power connector and a 244W TDP. The TDP is somewhat lower because the chip was slightly tweaked and the board has better power management. It also benefits from a cooling solution that is new to reference high-end cards, the vapour chamber. It's worth noting that vapour chambers have been around for a couple of years, but they were usually reserved for non-reference boards. This is the first time we see one as part of a reference design.
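As a quick sanity check on these figures, the sketch below derives the shader clock and the theoretical peak memory bandwidth. It assumes the 4008MHz effective memory rate that this review quotes as the reference clock in the overclocking section; the function names are ours and purely illustrative.

```python
# Figures taken from the review; the helper names are illustrative only.
CORE_CLOCK_MHZ = 772
SHADER_MULTIPLIER = 2          # shaders run at twice the core clock
MEMORY_EFFECTIVE_MHZ = 4008    # effective GDDR5 rate quoted in the overclocking section
BUS_WIDTH_BITS = 384


def shader_clock_mhz(core_mhz, multiplier=SHADER_MULTIPLIER):
    """Shader clock derived from the core clock."""
    return core_mhz * multiplier


def memory_bandwidth_gb_s(effective_mhz, bus_width_bits):
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return effective_mhz * 1e6 * bytes_per_transfer / 1e9


if __name__ == "__main__":
    print(shader_clock_mhz(CORE_CLOCK_MHZ))
    print(round(memory_bandwidth_gb_s(MEMORY_EFFECTIVE_MHZ, BUS_WIDTH_BITS), 1))
```

This is a theoretical ceiling of roughly 192GB/s, not a measured figure.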

The TDP is some 50W lower than on the old Geforce GTX 480. Nvidia didn’t talk about the size of the core in square millimeters, but it should be very close to the original GF100 core, so around 500mm² sounds about right.

The only way to check is to cut the card in half and measure it, but since we have only one sample, we probably won't.

The transistor count is around 3 billion. Once again Nvidia doesn’t talk about the real number, probably in order to hide some things, like the fact that the chip has 512 shaders and all eight clusters turned on, the same number that a fully enabled GF100 chip could offer.

Nvidia took care of leakage at the transistor level, something that was responsible for many performance and temperature related issues, and managed to gain some performance by increasing the clock. Nvidia also said that you should see this new chip as a merger of some architectural advantages of the GF104 and GF100, and this was the formula that resulted in the GF110.

The video engine didn’t change; it is the same as on the GF100 Fermi chips. You still have a maximum of two displays supported and no DisplayPort, at least not on reference boards. The reference card has two DVI ports and a mini HDMI port. A mini HDMI cable might be bundled by partners, and if it is not, it will cost you much more than the standard full-size HDMI cable that you use for your HD TV. Let's hope that Nvidia can control this, otherwise we see little use for mini HDMI.

Nvidia couldn’t fit a full-size HDMI port as it didn’t have enough space on the back of the card. One slot is reserved for the connectors, while the other one is used as an exhaust grille that is very important for Nvidia's new cooler. You could use a mini HDMI dongle to get a proper HDMI port, but the other downside for some users will be the fact that Nvidia only supports HDMI 1.3 and not later standards. Clearly consumers deserve to get HDMI and DisplayPort on any high-end card and we're not thrilled by the lack of the latter.

The video engine will most likely change on the other 500 series cards such as the GTX 560, but this is something that we need to wait and see. For this chip it was too risky to meddle with it, as changing more parts of the chip on a new design that simply has to succeed is very dangerous, especially when you report to Jen Hsun Huang.

There are two more things related to the card itself that we haven't seen before. First is the new power current regulation with two circuits, and these circuits regulate the speed of the fan much more precisely than before. In previous designs the GPU temperature was the key element in fan speed regulation, and with this innovation Nvidia can get smoother fan control.

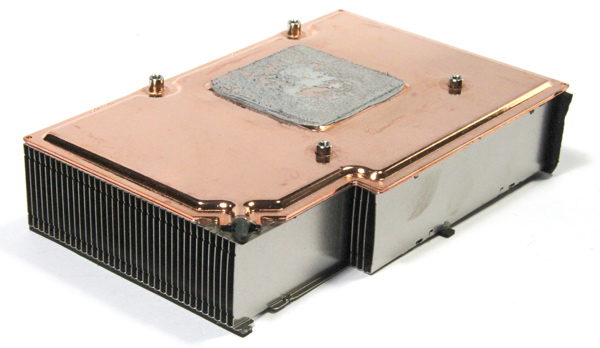

This also helps the vapour chamber cooler, one of the cool features of this new card. The principle is simple: there is a chamber full of a water-like liquid that evaporates and carries heat away from the GPU. Once the vapour cools, it condenses, drops back down to the GPU and the cycle starts all over. It's basic physics, but it works.

Heatpipes are gone and the vapour chamber looks like a more efficient way to cool the card. As a result the metal plate is gone and the card's temperature dropped from an average of 94 degrees Celsius on the GTX 480 to 84 degrees Celsius on the GTX 580. You can now touch the card and not get burned, which is a great improvement over the GTX 480.

Since the card doesn’t have a metal plate it is lighter and won't easily break the PCIe slot in transport, but of course this dual-slot card is still quite heavy.

We have been waiting for a long time to see technologies similar to water cooling and vapour chambers in GPU cooling. Basically, GPU development has come to the point where air coolers and heatpipes are simply not enough; more cooling performance is needed.

For the GTX 580, Nvidia decided to use the new vapour chamber cooling. This is not much of a surprise as the GTX 480’s cooler has taken enough flak for Nvidia to realize that they have to raise their game in this field.

However, vapour-chamber technology is anything but a new technology, and as far as we recall it was Sapphire who first used it to cool GPUs. Thanks to this tech, Nvidia can get rid of the heatpipe solutions such as the one on GTX 480 (picture below). As you probably already know, the GTX 480 is a pretty hot and loud card, but the GTX 580 is a different beast. It is, in fact, surprisingly quiet for a high-end card.

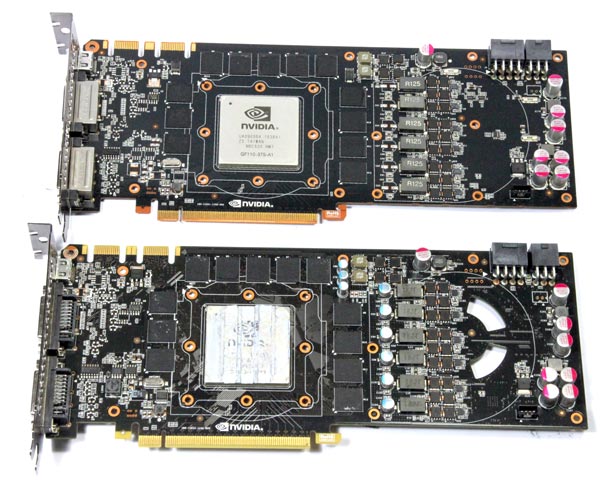

The GTX 580’s PCB design hasn’t changed much from the GTX 480, but there have been improvements in power regulation circuitry (picture below). Nvidia added Advanced Power Management, a feature which monitors consumption and performs power capping – all to protect the graphics cards from excessive power draw.

The GTX 480 has a hole in the PCB below the fan, so that the fan could draw fresh air in scenarios where another card in SLI mode blocks the fan. The GTX 580 has no holes in the PCB or the cooler’s metal block.

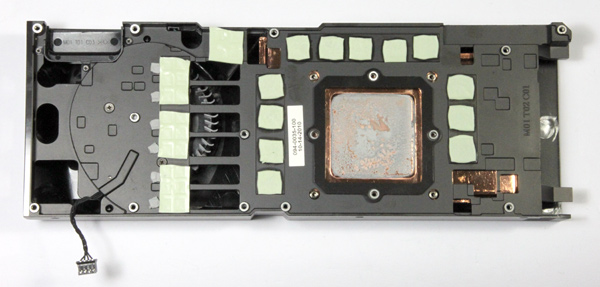

The memory modules are in contact with the cooler’s metal block whereas the GPU is in direct contact with the vapour-chamber (picture below).

One of the positive aspects of vapour chamber technology is that it doesn’t take up much space, while at the same time it renders heatpipe solutions obsolete.

The GTX 580’s vapour chamber is the largest one we’ve seen so far. The heatsink is on top of the chamber, which allows for quick heat transfer from the GPU and further dissipation via the heatsink fins.

The GF110 GPU takes up the same amount of space as the GTX 480’s GF100.

Since the cards’ PCBs are pretty similar, we thought it would be nice to see whether the GTX 580’s cooler would fit onto a GTX 480. Unfortunately, a few capacitors in the power regulation area wouldn’t allow for our GTX 480 makeover.

GTX 580 comes with two dual-link DVI connectors and one mini-HDMI.

The card is powered via one 6-pin and one 8-pin power connector. The picture below compares the GTX 480 and GTX 580.

Testbed:

Motherboard: EVGA 4xSLI

CPU: Core i7 965 XE (Intel EIST and Vdrop enabled)

Memory: 6GB Corsair Dominator 12800 7-7-7-24

Harddisk: OCZ Vertex 2 100 GB

Power Supply: CoolerMaster Silent Pro Gold 800W

Case: CoolerMaster HAF X

Fan Controller: Kaze Master Pro 5.25"

Operating System: Win7 64-bit

Nvidia driver: 262.99_desktop_win7_winvista_64bit_english

AMD driver: Catalyst 10.10 (CCC)

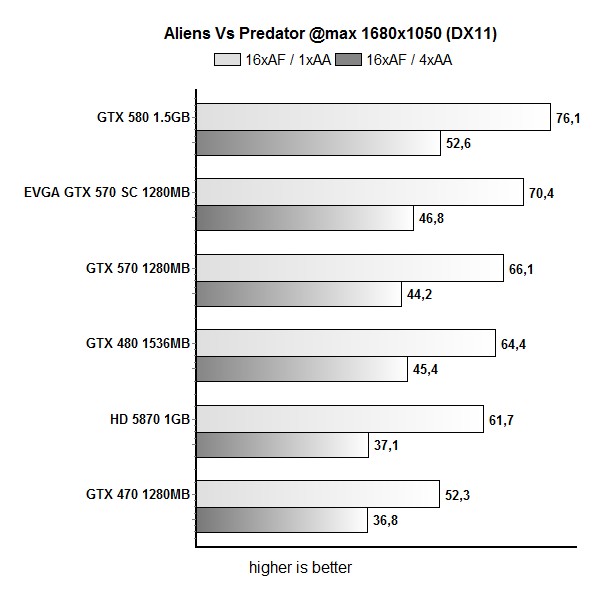

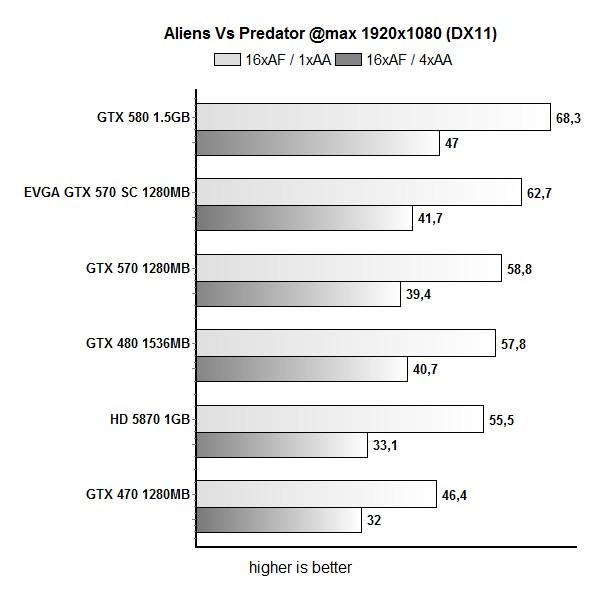

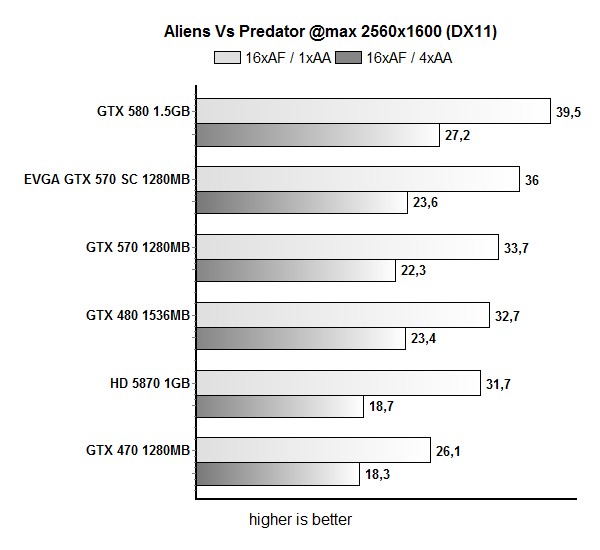

Aliens vs Predator

The GTX 580 outruns the GTX 480 by up to 20% in AvP, which is similar to the results in other tested games. The difference is about 3-4% lower at 2560x1600 than at 1920x1080.

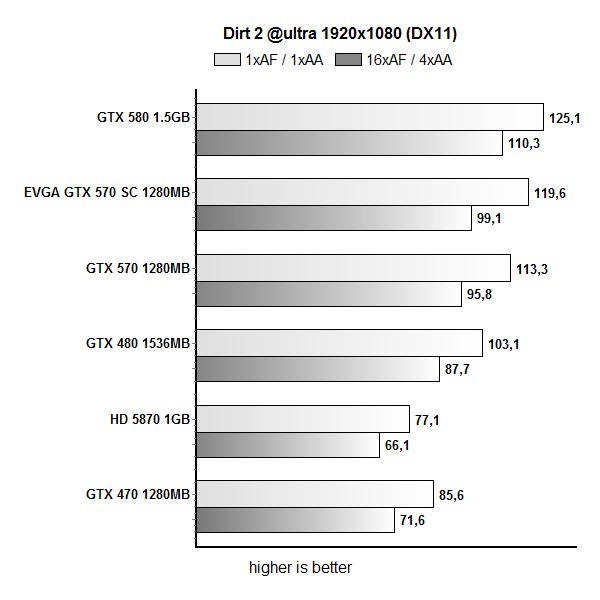

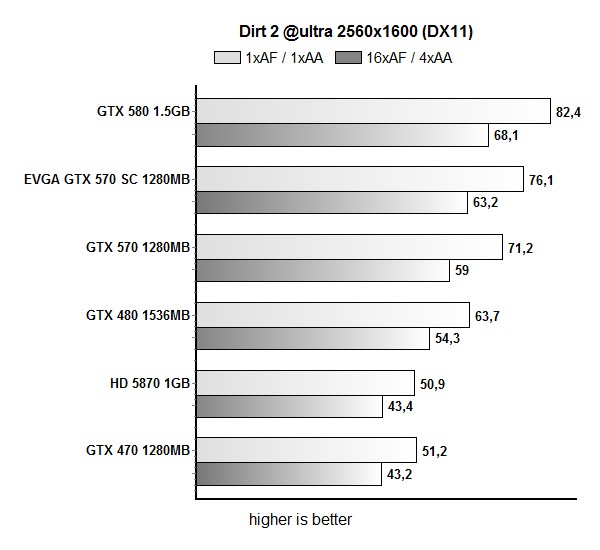

Dirt 2

Dirt 2 rates the GTX 580 way above the GTX 480 as it beats it by 25.9%.

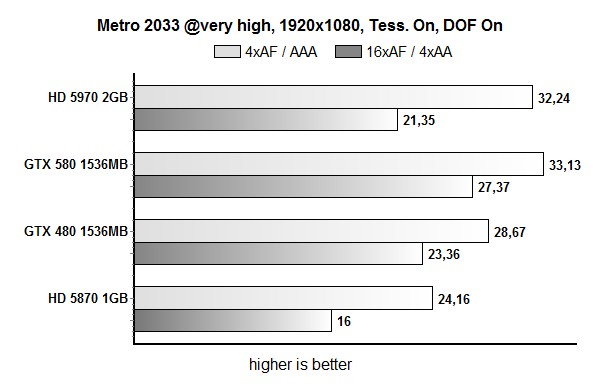

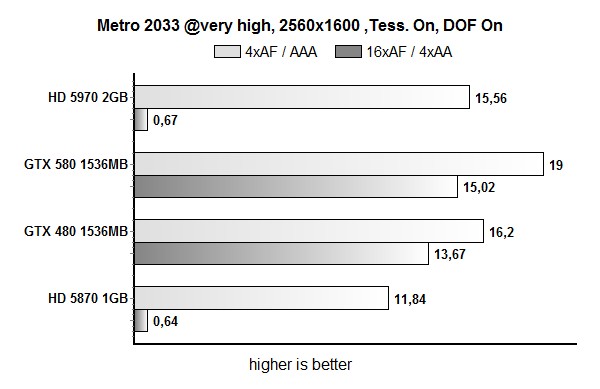

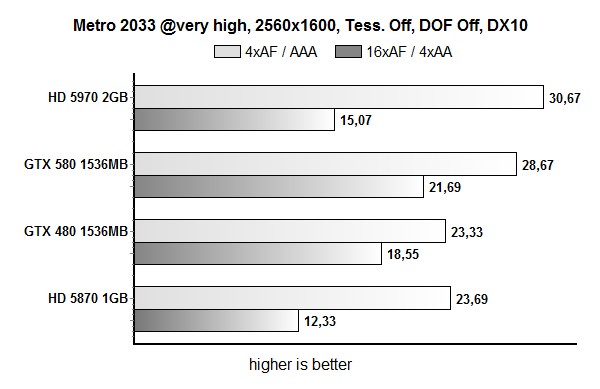

Metro 2033 – DX10 & DX11

In Metro 2033 we performed DirectX 11 as well as DirectX 10 tests. In DX11 tests the GTX 580 outperformed the GTX 480 by 17%, whereas DX10 testing increases this advantage to 23.9%.

In this game we had some issues with Radeon cards in tests with antialiasing.

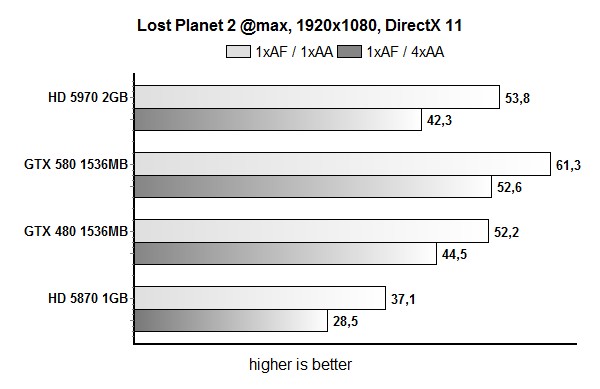

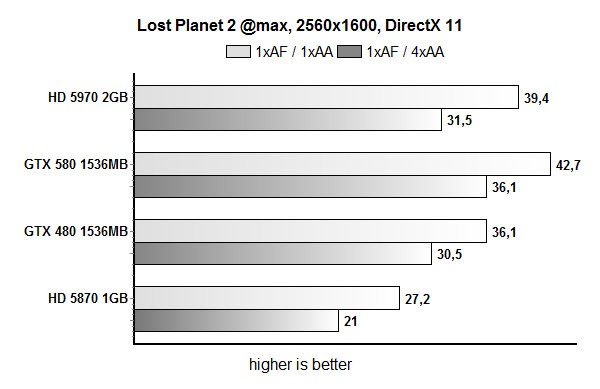

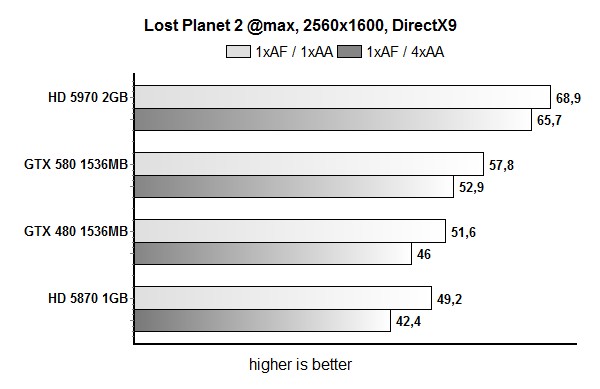

Lost Planet 2

Lost Planet 2 shows the GTX 580 holding a constant advantage of about 18% over the GTX 480, regardless of the resolution and in-game settings.

3DMark Vantage

We’ve been hearing about the GTX 580 performing 30 percent better than the GTX 480, but we now know that these figures are not actual gaming results but rather 3DMark Vantage scores.

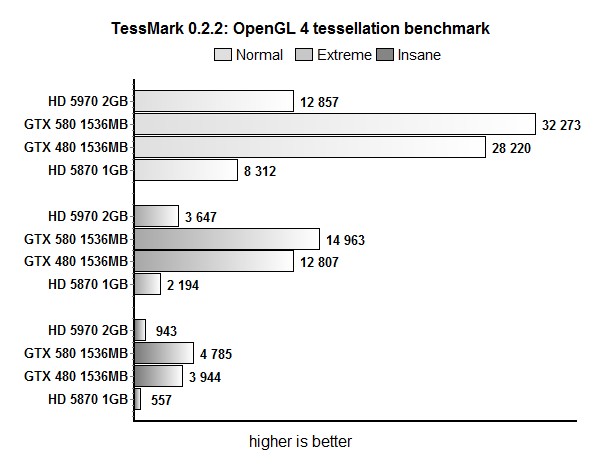

TessMark

TessMark shows that tessellation is one of the main advantages of Nvidia's Fermi architecture over AMD boards. With each level of tessellation, the GTX 580 widens the performance gap between it and GTX 480 – it starts at 14% in the least demanding test and goes up to 21% in the most demanding tests. However, tessellation is still not implemented in numerous games and it's hardly a major selling point, but it does help make the GTX 580 a bit more future proof.

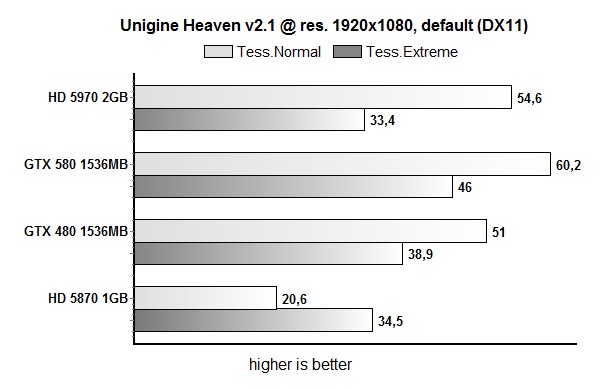

Unigine Heaven

The Unigine Heaven test, which we used to test the card’s tessellation capabilities, shows that the GTX 580 is 18% faster than the GTX 480, with the new card’s GPU clocked 9% higher than the GTX 480’s.
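To separate the clock bump from the other improvements, the sketch below divides the overall speed-up by the clock increase. It assumes performance scales linearly with GPU clock, which is a simplification, and the function name is ours.

```python
def per_clock_gain_pct(total_speedup_pct, clock_increase_pct):
    """Speed-up left over after removing the clock increase.

    Assumes performance scales linearly with GPU clock, which is
    only a rough approximation for real workloads.
    """
    total = 1 + total_speedup_pct / 100
    clock = 1 + clock_increase_pct / 100
    return (total / clock - 1) * 100


if __name__ == "__main__":
    # 18% faster overall with a 9% higher clock, as quoted in the review
    print(round(per_clock_gain_pct(18, 9), 1))
```

Under that assumption, roughly 8% of the Unigine Heaven gain would come from something other than clock speed, such as the extra enabled shaders.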

Overclocking, Consumption and Thermals

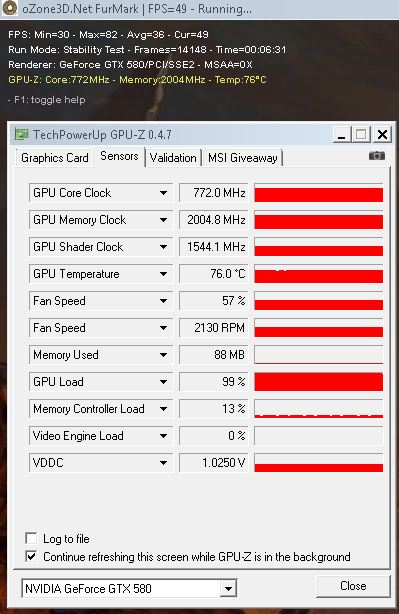

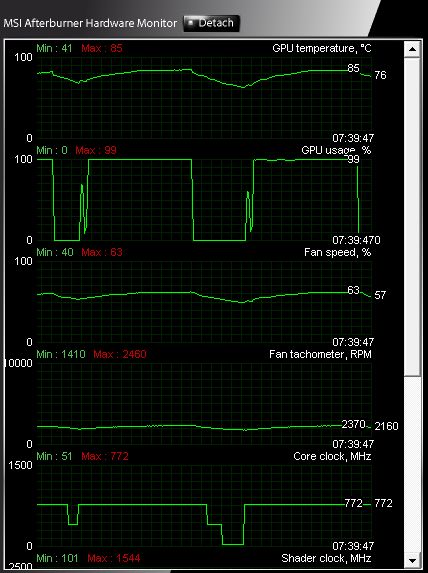

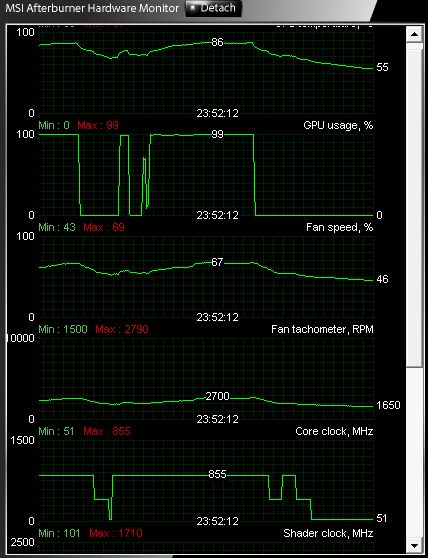

As our results readily confirm, the GTX 580 packs plenty of OC potential. We managed to hit 855MHz for the GPU and 1190MHz (4760MHz effectively) for the memory, all without meddling with voltages or changing the fan speed from AUTO mode. Reference clocks for this card are 772MHz for the GPU and 4008MHz for the memory. We heard that most GTX 580 cards can be clocked up to 890MHz by adding voltage, but we will check this later on.
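Expressed as percentages over the reference clocks, the overclock works out as in the short sketch below. The clock values come from this review; the helper name is ours.

```python
def overclock_pct(reference_mhz, overclocked_mhz):
    """Percentage increase of an overclocked frequency over its reference."""
    return (overclocked_mhz / reference_mhz - 1) * 100


if __name__ == "__main__":
    gpu_gain = overclock_pct(772, 855)     # GPU: 772MHz -> 855MHz
    mem_gain = overclock_pct(4008, 4760)   # memory (effective): 4008MHz -> 4760MHz
    print(f"GPU: +{gpu_gain:.1f}%  memory: +{mem_gain:.1f}%")
```

That is roughly 11% on the GPU and close to 19% on the memory at stock voltage.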

The fan isn’t too loud during intensive gaming and we can finally say that we’re pleased with the noise levels. Speeding up the fan didn’t significantly contribute to overclocking headroom. MSI’s Afterburner v.2.0 allowed us to set the fan to a maximum of 85% RPM, where we were able to push the GPU to 860MHz. MSI’s Afterburner beta does support changing voltages and we should play with it later.

Nvidia uses a new technology dubbed Advanced Power Management on the GTX 580. It is used for monitoring power consumption and performing power capping in order to protect the card from excessive power draw.

Dedicated hardware circuitry on the GTX 580 graphics card performs real-time monitoring of current and voltage. The graphics driver monitors the power levels and will dynamically adjust performance in certain stress applications such as FurMark and OCCT if power levels exceed the card’s spec.

Power monitoring adjusts performance only if power specs are exceeded and if the application is one of the apps Nvidia has defined in its driver to monitor, such as FurMark and OCCT. This should not significantly affect gaming performance, and Nvidia indeed claims that no game so far has managed to wake this mechanism from its slumber. For now, it is not possible to turn off power capping.

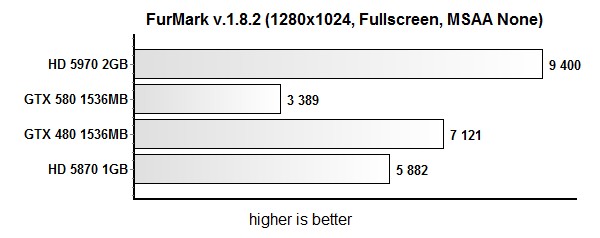

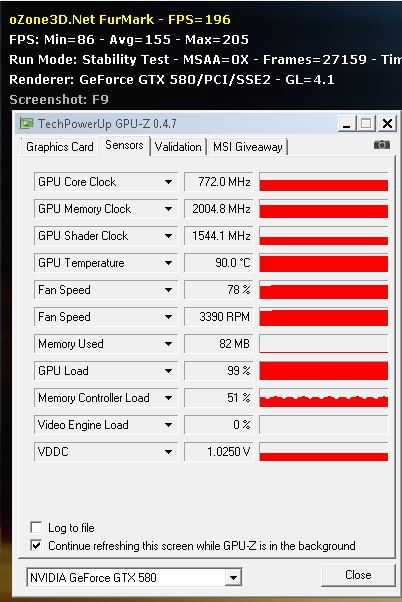

The GTX 580’s power caps are set close to the PCI Express spec for each 12V rail (6-pin, 8-pin, and PCI Express). Once power capping goes active, chip clocks go down by 50%. It seems that this is the reason why the GTX 580 scores pretty badly compared to the GTX 480 in FurMark.
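The behaviour described above can be modelled roughly as follows. This is only our sketch of the described logic, not Nvidia's driver code: the per-rail caps use the PCI Express limits of 75W (slot), 75W (6-pin) and 150W (8-pin) as stand-ins for the undisclosed thresholds, and all names are hypothetical.

```python
# Hypothetical model of the power capping described in the review.
# Caps are the PCI Express limits, used as stand-ins for Nvidia's
# actual (undisclosed) thresholds.
RAIL_CAPS_W = {"pcie_slot": 75.0, "6_pin": 75.0, "8_pin": 150.0}
MONITORED_APPS = {"FurMark", "OCCT"}   # apps the review names as monitored
THROTTLE_FACTOR = 0.5                  # clocks drop by 50% when capping is active


def capped_clock_mhz(app_name, rail_power_w, base_clock_mhz=772.0):
    """Return the GPU clock after applying the modelled power cap."""
    if app_name not in MONITORED_APPS:
        return base_clock_mhz          # games are not on the list
    over_cap = any(
        rail_power_w.get(rail, 0.0) > cap for rail, cap in RAIL_CAPS_W.items()
    )
    return base_clock_mhz * THROTTLE_FACTOR if over_cap else base_clock_mhz


if __name__ == "__main__":
    draw = {"pcie_slot": 70.0, "6_pin": 72.0, "8_pin": 160.0}  # made-up readings
    print(capped_clock_mhz("FurMark", draw))
    print(capped_clock_mhz("Aliens vs Predator", draw))
```

The made-up readings only illustrate the two paths: a monitored stress test over its cap is halved, while a game with the same draw keeps its full clock.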

FurMark temperatures didn’t go over 76 °C, which isn’t very realistic – in gaming tests we measured up to 85°C, which seems to suggest that Nvidia overdid the preventive measures.

GPUZ 0.4.7 doesn’t show downclocking during FurMark, but the new version is set to change that. Below you see the GPU temperature graph we captured during Aliens vs. Predator tests.

After overclocking, temperatures were at the same level as before. The fan was a bit louder, but still not too loud.

The older FurMark, version 1.6, shows that the GPU can hit 90°C.

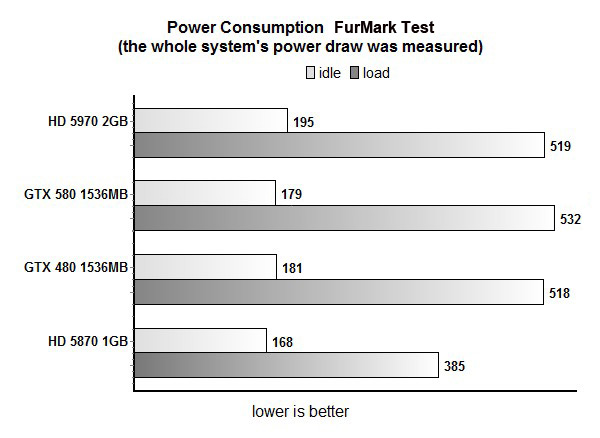

Consumption is on par with the GTX 480. You’ll find older FurMark test results below, because in new FurMark tests our rig didn’t consume more than 367W. During gaming we measured rig consumption of about 450W.

Conclusion

Nvidia has improved a lot of things with this so-called new chip. The Geforce GTX 580 is the fastest single GPU card that we have tested so far. The cooler is improved compared to GTX 480; the card is definitely not as hot as before and you can see that average gaming improvement over the GTX 480 is some 20 percent. It can jump to 30 percent but only in a few specific usage scenarios.

Tessellation runs fine, but the lack of games with proper support is really spoiling the fun. However, make no mistake - this card is definitely a great solution when it comes to tessellation. AMD's Cayman is the riddle that we still haven’t solved and this is the only ATI chip that might counter this DirectX 11 single GPU in the high end.

Still, bear in mind that dual GF110 based cards should come out and be even faster. The Antilles, a.k.a. Radeon HD 6990, dual-GPU cards should also end up faster. Both of them are scheduled for launch this year and traditionally should cost at least €100 or $100 more than single GPU cards. Unfortunately, pricing is something you can never claim with certainty. Of course, two chips of that magnitude will end up very hot.

With an average selling price of €439 and listings going all the way to €499, we cannot say it’s really a bargain. The US version is listed for $559.99 which sounds like a lot.

It’s well worth noting that the card ends up faster than even a dual chip Radeon HD 5970 card, but it also loses in at least a few tests especially at 2560x1600 and some DirectX 11 games. It obviously leaves the Radeon HD 5870 in the dust, and there’s no doubt it’s a much faster card.

Power consumption is slightly better than GTX 480 but it is still pretty high. It is not as hot as the GTX 480 and it’s also quieter, which can’t be ignored.

Overall the card is the fastest single GPU card available. This will be the case until the Radeon HD 6970, a.k.a. the Cayman single GPU card, is here. Of course, there are no guarantees that this card will be out on time, or that it will beat the GTX 580.

The overall feeling is that this is Fermi done right, unfortunately a year later than it was supposed to appear. If you are after a single GPU card, you don’t care much about your power bill and you can afford it, there’s no reason to overlook the Geforce GTX 580.