
Review: The 295+ beast is out

Our hardware testing always produces a winner, and today is surely a sad day for ATI, since Nvidia takes the throne once again. Both companies take pride in having the fastest card around, but we know that most of the money they rake in comes from low-end and mainstream graphics cards. The strategy is simple: when a customer has to choose between two average low-end cards, they usually pick the company that has the fastest card in its lineup. Nvidia is famous for being the fastest, and since it insists on that title more than ATI does, it has no plans to let it go. The GTX 295 is officially out today, and it is set to dethrone ATI’s champion, the HD 4870 X2. Both cards are dual-chip designs, so the days when single-chip cards held the throne are definitely a thing of the past.

Last year we saw the large GT200 chip set to beat ATI’s RV770, the chip on the HD 4870. However, the optimism didn’t last long, as the dual-RV770 card, codenamed R700, managed to beat the GTX 280 (GT200 chip). Two small but high-clocked chips on the HD 4870 X2 (R700) beat the 65nm GT200, but Nvidia didn’t sit idle and moved to a 55nm process, which resulted in cooler and cheaper chips. We have to compliment the Geforce GTX 295: although it’s a “sandwich design”, the card has unusually good thermal properties, not to mention the sheer muscle it packs under the hood.

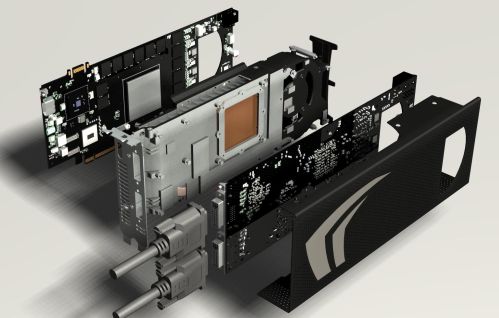

So, the GTX 295 is a dual-chip card with a large cooler placed in the middle, sandwiched between the two PCBs. The GPUs communicate over an internal SLI bridge. Looks-wise, the GTX 295 is quite appealing and not very different from other dual-slot, single-PCB cards.

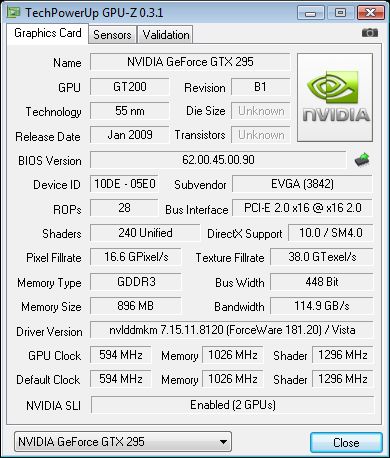

EVGA sent us its version of the GTX 295, named GTX 295+, which suggests an overclocked edition. Seeing factory-overclocked cards on launch day shows just how determined Nvidia is to take the throne back.

Both chips on the reference card run at 576MHz for the core and 1242MHz for the shaders, while the memory runs at 1998MHz (effective). The card packs 1792MB of memory in total, meaning that each chip addresses 896MB. Such odd memory sizes reveal that each chip has a 448bit memory interface, because one of the chip’s eight ROP/memory partitions is disabled. The chips on this card sit somewhere between the GTX 280 and the GTX 260: the Geforce GTX 295 keeps the clocks and the memory configuration of the GTX 260 and the shader processors of the GTX 280.

The EVGA GTX 295+ core runs at 594MHz, an overclock of only 18MHz or 3%, but our tests show that any overclocking on these cards adds to performance. The shaders are overclocked by 54MHz (to 1296MHz) and the memory by 27MHz compared to the reference GTX 295.
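For readers who want to check the percentages, here is a small Python sketch of the arithmetic. The core and shader clocks come from the review. The memory values are our assumption: we take the reference clock as the actual GDDR3 clock of 999MHz (half of the quoted 1998MHz effective) and read EVGA's +27MHz as applying to that actual clock, giving 1026MHz, which is the reading consistent with the 229.8GB/s bandwidth figure quoted for the EVGA card.

```python
# Clock domains in MHz. Memory values are the actual GDDR3 clock
# (assumption: 999MHz = 1998MHz effective; EVGA's 1026MHz assumes the
# +27MHz overclock applies to this actual clock).
REFERENCE_GTX295 = {"core": 576, "shader": 1242, "memory": 999}
EVGA_GTX295_PLUS = {"core": 594, "shader": 1296, "memory": 1026}


def overclock_percent(reference_mhz: float, overclocked_mhz: float) -> float:
    """Factory overclock expressed as a percentage of the reference clock."""
    return (overclocked_mhz - reference_mhz) / reference_mhz * 100


if __name__ == "__main__":
    for domain, ref in REFERENCE_GTX295.items():
        oc = EVGA_GTX295_PLUS[domain]
        print(f"{domain:>6}: +{oc - ref}MHz ({overclock_percent(ref, oc):.1f}%)")
```

The core works out to 3.1%, in line with the roughly 3% mentioned above, while the shader and memory overclocks come to about 4.3% and 2.7%.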

The GT200 chip supports a 512bit memory interface and 1024MB of memory, but the GTX 295 doesn’t use it to the max. On the other hand, each chip in the GTX 295 has 240 shaders, just like the GTX 280, which adds up to 480 for the whole card. All of the texture units are enabled as well, 80 per chip to be exact.

Furthermore, each chip on the GTX 295 has seven ROP and framebuffer partitions. Each ROP partition contains 4 ROP units, which gives 28 per chip and a total of 56 ROP units on the card. At the same time, each of the seven partitions is linked to memory via a 64bit link, which explains the 448bit memory interface. The reference card’s total bandwidth is 223.8GB/s, while EVGA’s card ends up at 229.8GB/s.
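The bandwidth figures follow from the bus width and the effective memory clock: bytes per transfer (bus width divided by 8) times transfers per second. The Python sketch below reproduces both numbers under two assumptions: 1GB is taken as 10^9 bytes, and EVGA’s effective memory clock is taken as 2052MHz (2 × 1026MHz), which is our reading of the 27MHz memory overclock rather than a figure from EVGA. Keep in mind that the card total simply adds the two GPUs’ separate memory pools; each GPU only sees its own 448bit interface.

```python
GPUS_PER_CARD = 2
PARTITIONS_PER_GPU = 7   # ROP/framebuffer partitions per GPU on the GTX 295
ROPS_PER_PARTITION = 4
LINK_WIDTH_BITS = 64     # memory link width per partition


def bandwidth_gbs(bus_width_bits: int, effective_clock_mhz: float) -> float:
    """Peak memory bandwidth in GB/s (1GB = 10**9 bytes) for one interface."""
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = effective_clock_mhz * 1e6
    return bytes_per_transfer * transfers_per_second / 1e9


bus_width_bits = PARTITIONS_PER_GPU * LINK_WIDTH_BITS                 # 448
total_rops = GPUS_PER_CARD * PARTITIONS_PER_GPU * ROPS_PER_PARTITION  # 56

reference_total = GPUS_PER_CARD * bandwidth_gbs(bus_width_bits, 1998)
evga_total = GPUS_PER_CARD * bandwidth_gbs(bus_width_bits, 2052)  # assumed clock

print(f"{bus_width_bits}bit per GPU, {total_rops} ROPs per card")
print(f"reference: {reference_total:.1f}GB/s, EVGA GTX 295+: {evga_total:.1f}GB/s")
```

Each GPU gets 56 bytes per transfer, so 1998MHz gives about 111.9GB/s per GPU and 223.8GB/s for the card, while the assumed 2052MHz gives 229.8GB/s.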

Each framebuffer partition is connected to 128MB of memory, for a total of 896MB per graphics processor, which means that the GTX 295 has 1792MB of GDDR3 memory in total. Nvidia’s next-gen GPUs, now known as GT212, will use GDDR5 memory, and if you’re wondering why Nvidia hasn’t done that already, the answer is that the GT200 memory controller doesn’t support GDDR5.
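The memory totals follow directly from the partition count. Here is a minimal sketch, assuming 128MB behind every partition as stated above. The eight-partition case corresponds to a full GT200 with its 512bit interface, as on the GTX 280. The function name is ours, chosen for illustration.

```python
MB_PER_PARTITION = 128  # framebuffer memory attached to each partition


def framebuffer_mb(partitions: int, mb_per_partition: int = MB_PER_PARTITION) -> int:
    """Memory addressed by one GPU, given its number of active partitions."""
    return partitions * mb_per_partition


per_gpu_mb = framebuffer_mb(7)     # GTX 295: seven active partitions per GPU
card_total_mb = 2 * per_gpu_mb     # two GPUs on the card
full_gt200_mb = framebuffer_mb(8)  # all eight partitions enabled (GTX 280)

print(per_gpu_mb, card_total_mb, full_gt200_mb)
```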

The GTX 295’s cooler has the job of cooling two GPUs that run quite hot. To solve the thermal issues, Nvidia designed a new cooler that, the company claims, improves cooling by 46% compared to the Geforce 9800 GX2. The new heatsink and fan can dissipate more than 289W.

The cores face inwards, touching the sandwiched cooler. The fan pushes part of the hot air out of the case through the I/O panel, while the rest escapes through the vents on top of the card. That is why adequate case airflow is important: it gets rid of the hot air that would otherwise linger inside the case.

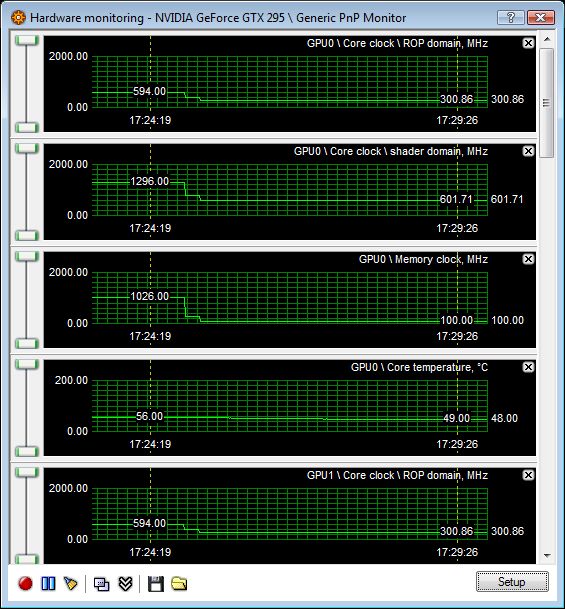

The GPU runs normally until it hits 105°C; once it crosses that threshold, it immediately downclocks to protect the chip. This is called throttling, but you’re highly unlikely to experience it with this cooler. The maximum temperature we measured during gaming and testing was 85°C, better than the HD 4870 X2, which goes over 92°C.
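The throttling behaviour described above boils down to a threshold check. The Python sketch below is purely illustrative: the function name and the 300MHz throttled clock are hypothetical, the actual driver or BIOS logic isn’t documented in this review, and real implementations typically add hysteresis so the clock doesn’t bounce around the threshold.

```python
THROTTLE_THRESHOLD_C = 105  # temperature at which the GPU downclocks, per the review


def select_core_clock(gpu_temp_c: float, normal_mhz: int, throttled_mhz: int) -> int:
    """Hypothetical governor: drop to a lower clock once the threshold is reached.

    No hysteresis is modelled; a real governor would likely wait for the
    temperature to fall well below the threshold before restoring clocks.
    """
    if gpu_temp_c >= THROTTLE_THRESHOLD_C:
        return throttled_mhz
    return normal_mhz


# The 85°C peak we measured stays well clear of the threshold.
# The 300MHz throttled clock is a made-up placeholder value.
print(select_core_clock(85, normal_mhz=576, throttled_mhz=300))
```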

To lower the card’s power consumption and temperatures in idle mode, the core and shader clocks are halved and the memory runs at a mere 100MHz. The Radeon HD 4870 X2 hits 64°C in idle mode, 16°C more than the GTX 295. The following picture is surely worth a thousand words, so see for yourself.
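The idle-mode clock drop can be written as a simple transformation of the load clocks. This is a sketch under stated assumptions: the `Clocks` type and `idle_clocks` function are hypothetical names, the memory figures are used exactly as quoted in the review (1998MHz under load, 100MHz at idle) without converting between actual and effective clocks, and the GPU’s real power-state tables are not modelled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clocks:
    core_mhz: float
    shader_mhz: float
    memory_mhz: float


def idle_clocks(load: Clocks, idle_memory_mhz: float = 100) -> Clocks:
    """Halve core and shader clocks and drop memory to a fixed idle clock."""
    return Clocks(
        core_mhz=load.core_mhz / 2,
        shader_mhz=load.shader_mhz / 2,
        memory_mhz=idle_memory_mhz,
    )


reference_load = Clocks(core_mhz=576, shader_mhz=1242, memory_mhz=1998)
# Halving gives 288MHz core and 621MHz shader, with memory at 100MHz.
print(idle_clocks(reference_load))
```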

You might've noticed that the card comes in a black mesh-grill shell, which improves airflow. It also protects the front of the card, and it's made of metal coated with a special soft-touch paint that makes it feel like rubber.

The PCB shown is almost identical to the one on the other side of the card, which isn't covered by the shell. The only differences are the SLI connector, which is located on one PCB only, and the HDMI and DVI outputs, which sit on different PCBs.

The card is, of course, PCIe 2.0, and it comes with two dual-link DVI outputs, both supporting 2560x1600, and one HDMI port.

Maximum board power is listed at 289W, and our testing revealed that the card consumes 20W less than the HD 4870 X2. The card is powered via one 6-pin and one 8-pin PCI-Express power connector, and we'd recommend a PSU rated at no less than 680W.
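The choice of one 6-pin and one 8-pin connector can be sanity-checked against the 289W board power. The Python sketch below uses the per-source limits from the PCI Express specifications (75W from the x16 slot, 75W per 6-pin and 150W per 8-pin connector); it says nothing about the 680W PSU recommendation, which also has to cover the rest of the system.

```python
SLOT_W = 75        # x16 graphics slot
SIX_PIN_W = 75     # 6-pin PCIe auxiliary power connector
EIGHT_PIN_W = 150  # 8-pin PCIe auxiliary power connector
GTX295_MAX_BOARD_POWER_W = 289  # as listed for the card


def power_ceiling_w(six_pin: int, eight_pin: int) -> int:
    """Maximum in-spec power a card can draw from the slot plus its connectors."""
    return SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


for six, eight in ((2, 0), (1, 1)):
    ceiling = power_ceiling_w(six, eight)
    fits = ceiling >= GTX295_MAX_BOARD_POWER_W
    print(f"{six}x 6-pin + {eight}x 8-pin: {ceiling}W, enough for 289W: {fits}")
```

Two 6-pin connectors would cap the card at 225W, so the 8-pin connector is what lifts the in-spec ceiling to 300W, just above the 289W rating.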

Just like all the newer Geforce cards, the GTX 295 comes with an SPDIF audio connector. EVGA also ships the cable you'll need to route sound from your motherboard or sound card to the graphics card, so a single HDMI cable carries both the video and the audio signal to your HDTV.

This time around, Nvidia left enough space around the power connectors, unlike on the Geforce 9800 GX2, where we had problems connecting the 8-pin power connector. The gap was just too tight, and Nvidia was quick to blame PSU manufacturers for not following the standards.

EVGA tends to use the same packaging for all of its cards, changing only the pictures, which isn't half bad if you ask us. It's nice and compact, and we often find Nvidia's fastest offerings inside, which alone is reason enough to grow fond of it.